Optimizing Cost with Google Cloud Storage

GSP649

Overview

In this lab, you use Cloud Functions and Cloud Scheduler to identify and clean up wasted cloud resources. From a Cloud Monitoring alerting policy, you trigger a Cloud Function that migrates a storage bucket to a less expensive storage class.

Google Cloud provides storage object lifecycle rules that automatically move objects to different storage classes based on a set of attributes, such as their creation date or live state. However, these rules can’t take into account whether the objects have been accessed. Sometimes, you might want to move newer objects to Nearline storage if they haven’t been accessed for a certain period of time.
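To make the access-based idea concrete, here is a minimal sketch of how idle buckets might be picked out from read counts. The function name `find_idle_buckets`, the threshold, and the sample counts are all hypothetical and are not part of the lab; in the lab itself, this decision is made by a Cloud Monitoring alerting policy.

```python
def find_idle_buckets(read_counts, threshold=0):
    """Return the names of buckets whose read count is at or below threshold.

    read_counts maps a bucket name to the number of object reads observed
    in some monitoring window (hypothetical input, not a real API response).
    """
    return sorted(
        name for name, count in read_counts.items() if count <= threshold
    )


# Hypothetical sample data: bucket name -> object reads in the window.
sample_counts = {
    "example-project-serving-bucket": 10000,
    "example-project-idle-bucket": 0,
}

print(find_idle_buckets(sample_counts))
```

A bucket that this check flags is a candidate for a cheaper storage class such as Nearline.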

What you'll do

- Create two storage buckets, add a file to the serving-bucket, and generate traffic against it.

- Create a Cloud Monitoring dashboard to visualize bucket utilization.

- Deploy a Cloud Function to migrate the idle bucket to a less expensive storage class, and trigger the function by using a payload intended to mock a notification received from a Cloud alerting policy.

Setup and requirements

In this section, you configure the infrastructure and identities required to complete the lab.

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab. Remember, once you start, you cannot pause a lab.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a pop-up opens for you to select your payment method. On the left is the Lab Details panel with the following:

- The Open Google Cloud console button

- Time remaining

- The temporary credentials that you must use for this lab

- Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

{{{user_0.username | "Username"}}}

You can also find the Username in the Lab Details panel.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

{{{user_0.password | "Password"}}}

You can also find the Password in the Lab Details panel.

- Click Next.

Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

- Accept the terms and conditions.

- Do not add recovery options or two-factor authentication (because this is a temporary account).

- Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Activate Cloud Shell

Cloud Shell is a virtual machine that is loaded with development tools. It offers a persistent 5 GB home directory and runs on Google Cloud. Cloud Shell provides command-line access to your Google Cloud resources.

- Click Activate Cloud Shell at the top of the Google Cloud console.

When you are connected, you are already authenticated, and the project is set to your Project_ID.

gcloud is the command-line tool for Google Cloud. It comes pre-installed on Cloud Shell and supports tab-completion.

- (Optional) You can list the active account name with this command:

- Click Authorize.

Output:

- (Optional) You can list the project ID with this command:

Output:

For full documentation of gcloud, refer to the gcloud CLI overview guide.

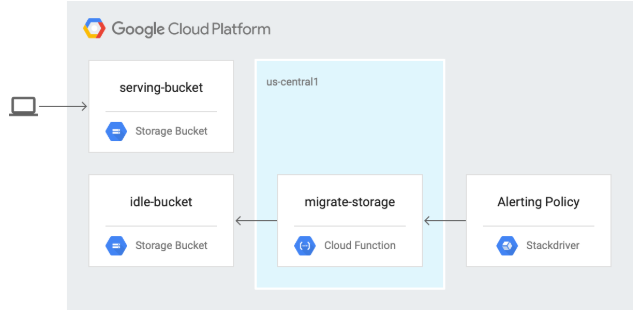

Architecture

In the following diagram, you trigger a Cloud Function to migrate a storage bucket to a less expensive storage class from a Cloud Monitoring alerting policy.

Task 1. Enable APIs and clone repository

- In Cloud Shell, enable the Cloud Scheduler API:

gcloud services enable cloudscheduler.googleapis.com

Click Check my progress to verify the objective.

- Clone the repository:

git clone https://github.com/GoogleCloudPlatform/gcf-automated-resource-cleanup.git && cd gcf-automated-resource-cleanup/

- Set environment variables and make the repository folder your $WORKDIR, where you run all commands related to this lab:

export PROJECT_ID=$(gcloud config list --format 'value(core.project)' 2>/dev/null)
WORKDIR=$(pwd)

- Install Apache Bench, an open source load-generation tool:

sudo apt-get update
sudo apt-get install apache2-utils -y

Task 2. Create cloud storage buckets and add a file

- In Cloud Shell, navigate to the migrate-storage directory:

cd $WORKDIR/migrate-storage

- Create serving-bucket, the Cloud Storage bucket. You use this later to change storage classes:

export PROJECT_ID=$(gcloud config list --format 'value(core.project)' 2>/dev/null)
gcloud storage buckets create gs://${PROJECT_ID}-serving-bucket -l {{{project_0.default_region|REGION}}}

Click Check my progress to verify the objective.

- Make the bucket public:

gsutil acl ch -u allUsers:R gs://${PROJECT_ID}-serving-bucket

- Add a text file to the bucket:

gcloud storage cp $WORKDIR/migrate-storage/testfile.txt gs://${PROJECT_ID}-serving-bucket

- Make the file public:

gsutil acl ch -u allUsers:R gs://${PROJECT_ID}-serving-bucket/testfile.txt

- Confirm that you’re able to access the file:

curl http://storage.googleapis.com/${PROJECT_ID}-serving-bucket/testfile.txt

The output is:

this is a test

Click Check my progress to verify the objective.

- Create a second bucket called idle-bucket that won’t serve any data:

gcloud storage buckets create gs://${PROJECT_ID}-idle-bucket -l {{{project_0.default_region|REGION}}}

Click Check my progress to verify the objective.
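The naming pattern used in this task can be sketched in Python as follows. This is an illustration only: the helper names `bucket_name` and `public_object_url` and the project ID `example-project` are hypothetical, and the URL layout simply mirrors the curl command above.

```python
from urllib.parse import quote


def bucket_name(project_id: str, suffix: str) -> str:
    """Build a bucket name the way the lab does: <project-id>-<suffix>."""
    return f"{project_id}-{suffix}"


def public_object_url(bucket: str, object_name: str) -> str:
    """Build the public URL for an object, mirroring the curl command above."""
    return f"http://storage.googleapis.com/{bucket}/{quote(object_name)}"


# "example-project" is a placeholder, not a real project ID.
serving = bucket_name("example-project", "serving-bucket")
idle = bucket_name("example-project", "idle-bucket")
print(public_object_url(serving, "testfile.txt"))
```

Prefixing each bucket with the project ID is a common way to keep bucket names globally unique.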

Task 3. Create a monitoring dashboard

Create a Monitoring Metrics Scope

Set up a Monitoring Metrics Scope that's tied to your Google Cloud Project. The following steps create a new account that has a free trial of Monitoring.

- In the Cloud Console, click Navigation menu (

) > View All Products > Monitoring.

When the Monitoring Overview page opens, your metrics scope project is ready.

-

In the left panel, click Dashboards > +Create Dashboard.

-

Name the Dashboard

Bucket Usage. -

Click +ADD WIDGET.

-

Click Line Chart.

-

For Widget Title, type

Bucket Access. -

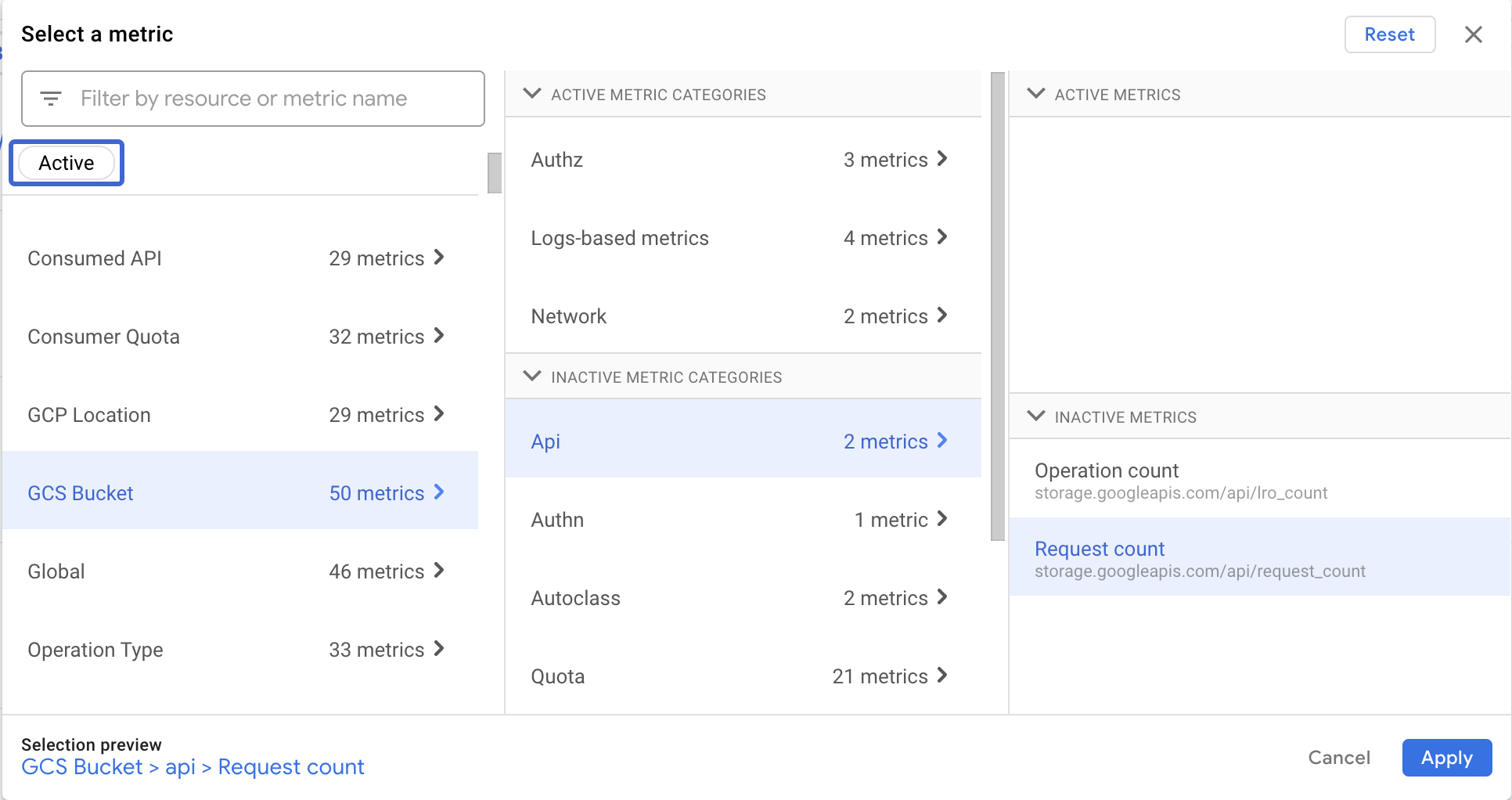

For select a metric > GCS Bucket > Api > Request count metric and click Apply.

- Click + Add Filter.

To filter by the method name:

- For Metric Labels, select method.

- For Comparator, select =(equals).

- For Value, select ReadObject.

- Click Apply.

- To group the metrics by bucket name, in the Group By drop-down list, select bucket_name and click ok.

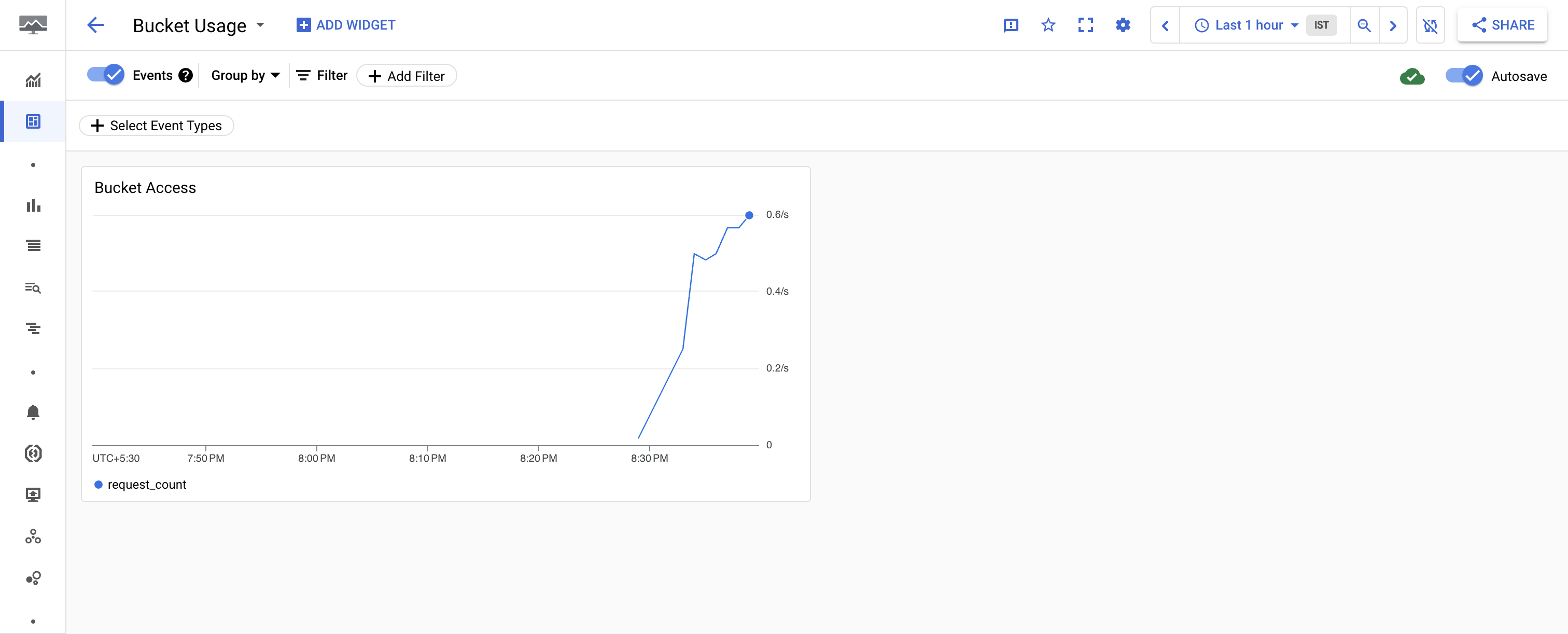

You’ve configured Cloud Monitoring to observe object access in your buckets. There's no data in the chart because there's no traffic to the Cloud Storage buckets.
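The chart selections above roughly correspond to a Cloud Monitoring filter expression. The sketch below builds such a string in Python. It assumes that the metric chosen in the console is `storage.googleapis.com/api/request_count` on the `gcs_bucket` resource type; the function name `build_read_filter` is hypothetical, and the lab itself only uses the console.

```python
def build_read_filter(method: str = "ReadObject") -> str:
    """Build a Monitoring filter for bucket request counts of one method.

    Assumption: the console's "GCS Bucket > Api > Request count" metric is
    storage.googleapis.com/api/request_count on the gcs_bucket resource.
    """
    clauses = [
        'metric.type="storage.googleapis.com/api/request_count"',
        'resource.type="gcs_bucket"',
        f'metric.labels.method="{method}"',
    ]
    return " AND ".join(clauses)


print(build_read_filter())
```

A filter of this shape could be a starting point for the same query outside the console, but verify the metric and label names against your project before relying on it.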

Task 4. Generate load on the serving bucket

Now that you have configured monitoring, use Apache Bench to send traffic to serving-bucket.

- In Cloud Shell, send requests to the object in the serving bucket:

- In the left panel, click Dashboards, and then click the Bucket Usage dashboard to see the Bucket Access chart.

- View traffic details.

You may need to press CTRL+C to return to the command prompt.
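The lab generates load with Apache Bench. As an alternative illustration of the same idea, the sketch below sends repeated GET requests from Python and tallies the results. It is not the lab's method: the names `fetch_status` and `generate_load` are hypothetical, and the requests run sequentially, so it is much slower than Apache Bench.

```python
from urllib.request import urlopen


def fetch_status(url: str) -> int:
    """Send one GET request and return the HTTP status code."""
    with urlopen(url, timeout=10) as response:
        return response.status


def generate_load(url: str, total_requests: int, fetch=fetch_status) -> dict:
    """Send total_requests sequential requests and count outcomes.

    Returns a dict mapping each status code (or the string "error" for
    failed requests) to the number of times it was seen. The fetch
    parameter is injectable so the loop can be exercised without a network.
    """
    counts = {}
    for _ in range(total_requests):
        try:
            outcome = fetch(url)
        except OSError:  # urllib's URLError and HTTPError derive from OSError
            outcome = "error"
        counts[outcome] = counts.get(outcome, 0) + 1
    return counts


# Example call (requires network access and a real, public object URL):
# generate_load("http://storage.googleapis.com/<bucket>/testfile.txt", 100)
```

Each successful request appears as a ReadObject call in the Bucket Access chart after a short delay.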

Task 5. Review and deploy the Cloud Function

- In Cloud Shell, enter the following command to view the Cloud Function code that migrates a storage bucket to the Nearline storage class:

cat $WORKDIR/migrate-storage/main.py | grep "migrate_storage(" -A 15

The output is:

def migrate_storage(request):
    # process incoming request to get the bucket to be migrated:
    request_json = request.get_json(force=True)
    # bucket names are globally unique
    bucket_name = request_json['incident']['resource_name']
    # create storage client
    storage_client = storage.Client()
    # get bucket
    bucket = storage_client.get_bucket(bucket_name)
    # update storage class
    bucket.storage_class = "NEARLINE"
    bucket.patch()

Notice that the Cloud Function uses the bucket name passed in the request to change its storage class to Nearline.

- Disable the Cloud Functions API:

gcloud services disable cloudfunctions.googleapis.com

- Re-enable the Cloud Functions API:

gcloud services enable cloudfunctions.googleapis.com

- Add the artifactregistry.reader permission for your appspot service account:

gcloud projects add-iam-policy-binding $PROJECT_ID \
--member="serviceAccount:$PROJECT_ID@appspot.gserviceaccount.com" \
--role="roles/artifactregistry.reader"

- Deploy the Cloud Function:

gcloud functions deploy migrate_storage --trigger-http --runtime=python37 --region {{{project_0.default_region | Region}}}

When prompted, enter Y to allow unauthenticated invocations.

- Capture the trigger URL into an environment variable that you use in the next section:

export FUNCTION_URL=$(gcloud functions describe migrate_storage --format=json --region {{{project_0.default_region | Region}}} | jq -r '.httpsTrigger.url')

Click Check my progress to verify the objective.
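The function shown in this task reads the bucket name from `incident.resource_name` without checking that the field exists. The sketch below shows one way that lookup could be isolated and validated. The helper `extract_bucket_name` is hypothetical and is not part of the repository's main.py.

```python
def extract_bucket_name(payload: dict) -> str:
    """Return payload["incident"]["resource_name"], or raise ValueError.

    Mirrors the lookup in migrate_storage, with explicit validation added
    for illustration.
    """
    try:
        name = payload["incident"]["resource_name"]
    except (KeyError, TypeError) as exc:
        raise ValueError("payload is missing incident.resource_name") from exc
    if not isinstance(name, str) or not name:
        raise ValueError("incident.resource_name must be a non-empty string")
    return name


# Hypothetical payload with a placeholder bucket name.
print(extract_bucket_name({"incident": {"resource_name": "example-project-idle-bucket"}}))
```

With a check like this, a malformed notification produces a clear error rather than an unhandled KeyError inside the function.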

Task 6. Test and validate alerting automation

- Set the idle bucket name:

export IDLE_BUCKET_NAME=$PROJECT_ID-idle-bucket

- Using the incident.json file, send a test notification to the Cloud Function you deployed:

envsubst < $WORKDIR/migrate-storage/incident.json | curl -X POST -H "Content-Type: application/json" $FUNCTION_URL -d @-

The output is:

OK

The output isn’t terminated with a newline and therefore is immediately followed by the command prompt.

- Confirm that the idle bucket was migrated to Nearline:

gsutil defstorageclass get gs://$PROJECT_ID-idle-bucket

The output is:

gs://-idle-bucket: NEARLINE

Click Check my progress to verify the objective.
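The envsubst step above fills the bucket name into the mock notification before it is posted. The sketch below reproduces that substitution in Python with a deliberately minimal template. The template is an assumption: it contains only the `incident.resource_name` field that the function reads, whereas the repository's incident.json may contain more fields. The name `render_incident` is hypothetical.

```python
import json
from string import Template

# Minimal, hypothetical stand-in for incident.json: only the field that
# migrate_storage reads is included.
INCIDENT_TEMPLATE = '{"incident": {"resource_name": "$IDLE_BUCKET_NAME"}}'


def render_incident(template: str, env: dict) -> dict:
    """Substitute $VARIABLES from env into the template and parse the JSON.

    Unlike envsubst, Template.substitute raises KeyError when a variable
    is missing instead of replacing it with an empty string.
    """
    return json.loads(Template(template).substitute(env))


payload = render_incident(
    INCIDENT_TEMPLATE, {"IDLE_BUCKET_NAME": "example-project-idle-bucket"}
)
print(json.dumps(payload))
```

The rendered payload is what the Cloud Function receives as the request body, which is why it can locate the idle bucket by name.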

Congratulations!

You completed the following tasks:

- Created two Cloud Storage buckets.

- Added an object to one of the buckets.

- Configured Cloud Monitoring to observe bucket object access.

- Reviewed the Cloud Function code that changes a bucket's storage class to Nearline.

- Deployed the Cloud Function.

- Tested the Cloud Function by using a payload that mocks a Cloud Monitoring alert notification.

Manual Last Updated July 24, 2024

Lab Last Tested July 24, 2024

Copyright 2024 Google LLC All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.