Using BigQuery and Cloud Logging to Analyze BigQuery Usage

GSP617

Overview

Cloud Logging serves as a central repository for logs from various Google Cloud services, including BigQuery, and is ideal for short to mid-term log storage. Many industries require logs to be retained for extended periods. To keep logs for extended historical analysis or complex auditing, you can set up a sink to export specific logs to BigQuery.

In this lab you view the BigQuery logs inside Cloud Logging, set up a sink to export them into BigQuery, and then use SQL to analyze the logs.

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab. Remember, once you start, you cannot pause a lab.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a pop-up opens for you to select your payment method. On the left is the Lab Details panel with the following:

- The Open Google Cloud console button

- Time remaining

- The temporary credentials that you must use for this lab

- Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

{{{user_0.username | "Username"}}} You can also find the Username in the Lab Details panel.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

{{{user_0.password | "Password"}}} You can also find the Password in the Lab Details panel.

- Click Next.

Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

- Accept the terms and conditions.

- Do not add recovery options or two-factor authentication (because this is a temporary account).

- Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Activate Cloud Shell

Cloud Shell is a virtual machine that is loaded with development tools. It offers a persistent 5 GB home directory and runs on Google Cloud. Cloud Shell provides command-line access to your Google Cloud resources.

- Click Activate Cloud Shell at the top of the Google Cloud console.

When you are connected, you are already authenticated, and the project is set to your Project_ID.

gcloud is the command-line tool for Google Cloud. It comes pre-installed on Cloud Shell and supports tab-completion.

- (Optional) You can list the active account name with this command: gcloud auth list

- Click Authorize.

Output:

- (Optional) You can list the project ID with this command: gcloud config list project

Output:

Note: For full documentation of gcloud, refer to the gcloud CLI overview guide.

Task 1. Open BigQuery

Open the BigQuery console

- In the Google Cloud Console, select Navigation menu > BigQuery.

The Welcome to BigQuery in the Cloud Console message box opens. This message box provides a link to the quickstart guide and the release notes.

- Click Done.

The BigQuery console opens.

Task 2. Create a dataset

- Under the Explorer section, click the three dots next to the project that starts with qwiklabs-gcp-.

- Click Create dataset.

- Set Dataset ID to bq_logs.

- Click CREATE DATASET.

Click Check my progress to verify the objective.

Task 3. Run a query

First, run a simple query that generates a log entry. Later you will use this entry to set up the log export from Cloud Logging to BigQuery.

- Copy and paste the following query into the BigQuery Query editor:

- Click RUN.

Task 4. Set up log export from Cloud Logging

- In the Cloud console, select Navigation menu > View All Products > Logging > Logs Explorer.

- In All resources, select BigQuery, then click Apply.

- Now, click the Run query button in the top right.

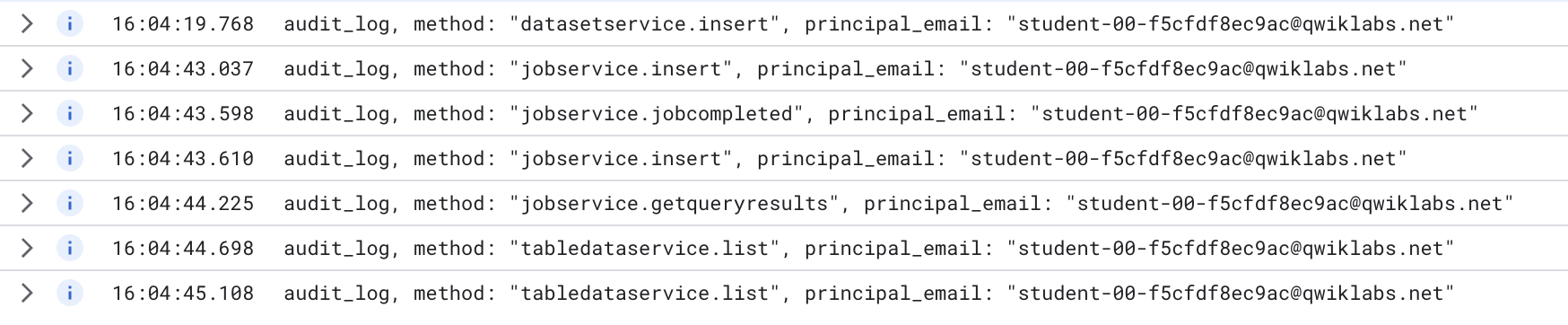

A few log entries from the query should appear.

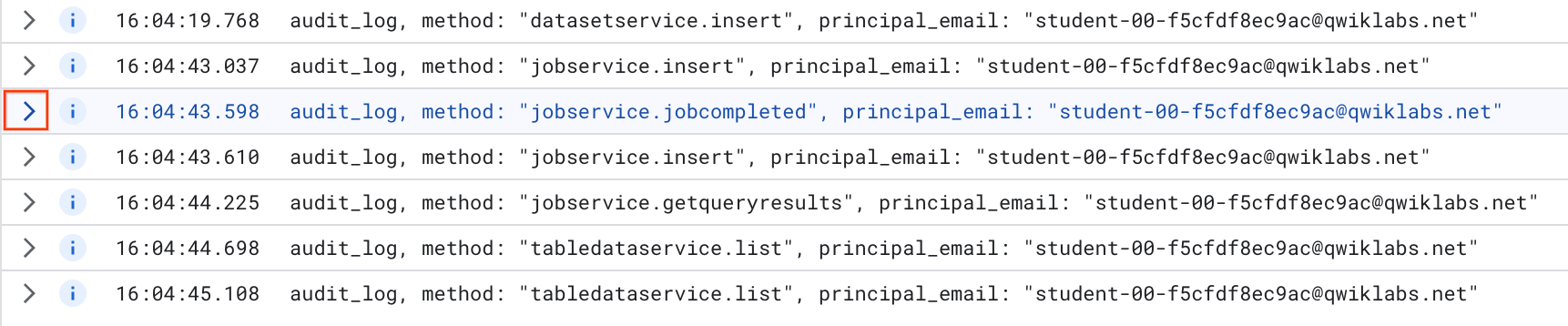

Look for the entry that contains the word "jobcompleted".

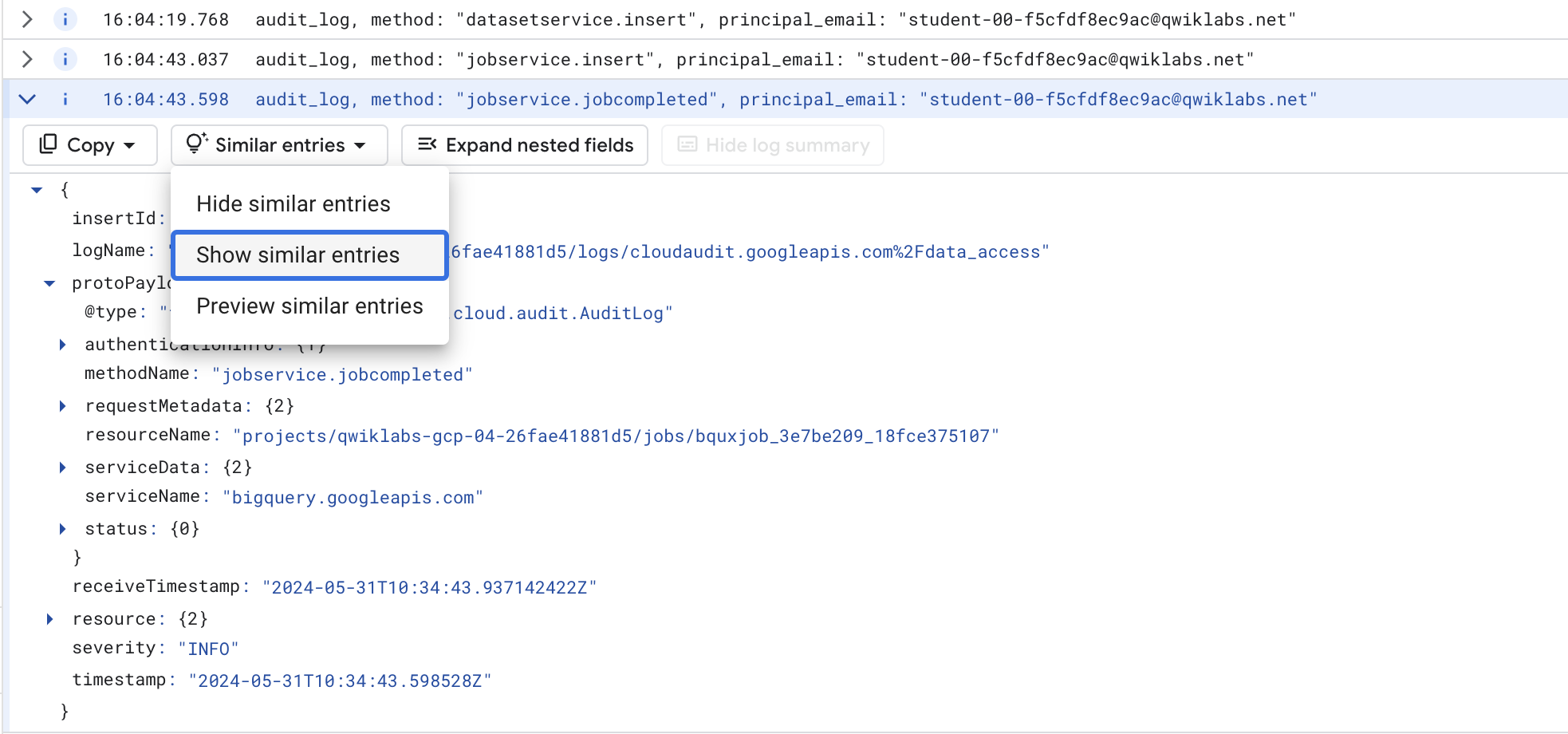

- Click on the arrow on the left to expand the entry.

Then click the Expand nested fields button on the right-hand side.

This shows the full JSON log entry. Scroll down and have a look at the different fields.

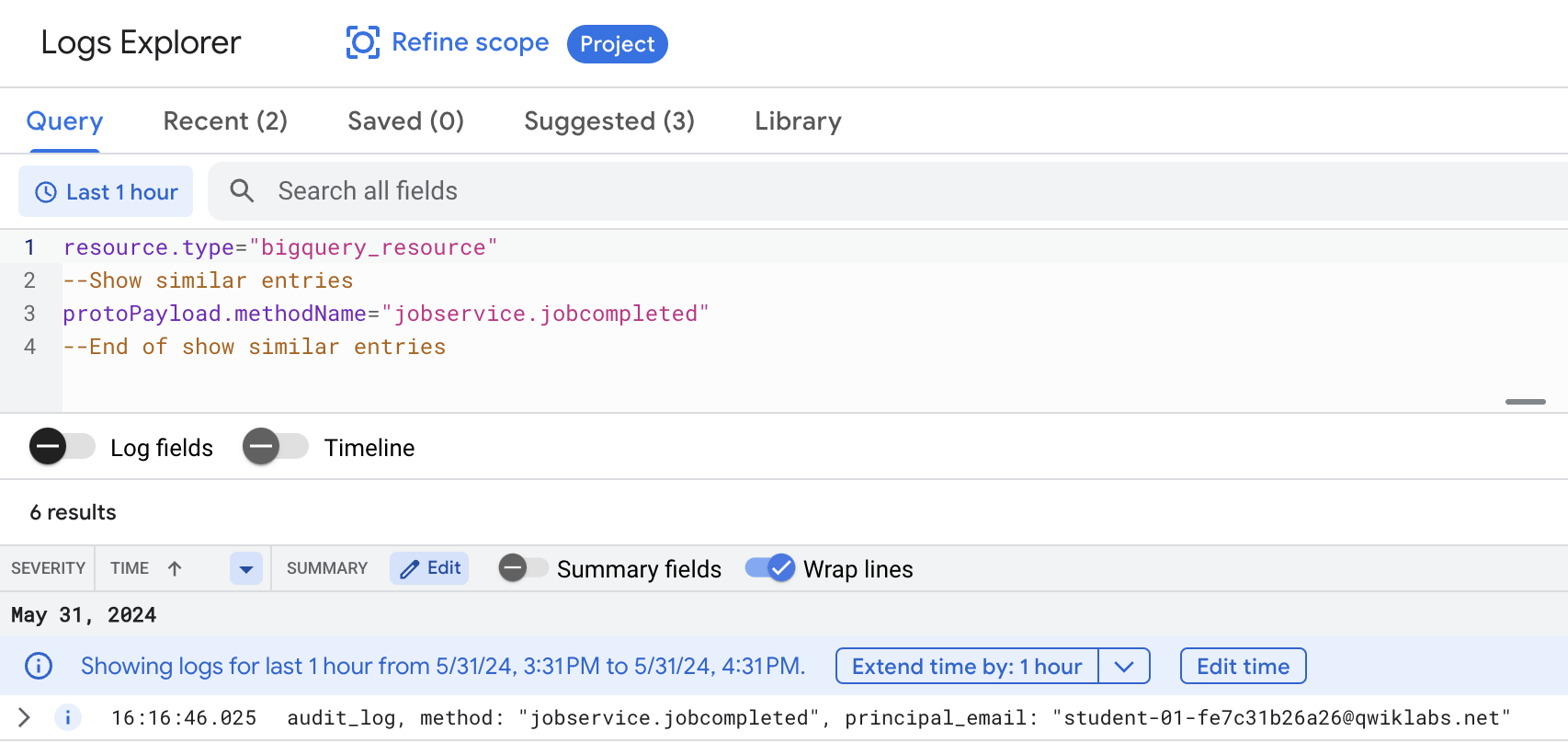

- Scroll back up to the header of the entry, click the Similar entries button, and choose Show similar entries.

This sets up the search with the correct terms. You may need to toggle the Show Query button to see it.

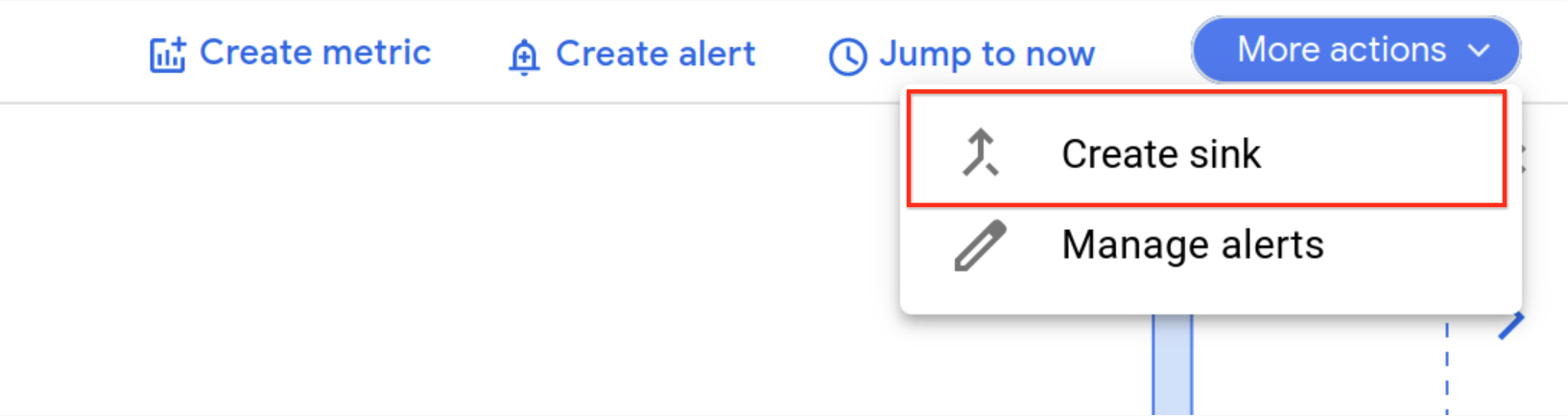
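The search that Show similar entries sets up is a plain-text Logging filter. As a rough sketch only, the Python below assembles a filter of that general shape. The field names resource.type and protoPayload.methodName, and the values used for them, are assumptions based on the legacy BigQuery audit log format, and build_log_filter is a hypothetical helper; compare the result with the query shown in your own Logs Explorer.

```python
def build_log_filter(resource_type: str, method_name: str) -> str:
    """Combine two field comparisons into one Logging filter string.

    Both field names are assumptions; the lab does not spell them out.
    """
    clauses = [
        f'resource.type="{resource_type}"',
        f'protoPayload.methodName="{method_name}"',
    ]
    # Logging filters join comparisons with AND.
    return " AND ".join(clauses)


# Assumed values for a completed BigQuery job entry.
log_filter = build_log_filter("bigquery_resource", "jobservice.jobcompleted")
print(log_filter)
```

Building the filter from named parts makes it easier to see which comparison selects the service and which selects the "jobcompleted" entries.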

Create sink

Now that you have the logs you need, it is time to set up a sink.

- Click Create sink from the More actions drop-down.

- Fill in the fields as follows:

- Sink name: JobComplete and click NEXT.

- Select sink service: BigQuery dataset

- Select BigQuery dataset (Destination): bq_logs (the dataset you set up previously)

- Leave the rest of the options at the default settings.

- Click CREATE SINK.

Any subsequent log entries from BigQuery are now exported to a table in the bq_logs dataset.
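A sink is essentially three pieces of information: a name, a destination, and a filter. The sketch below collects the values entered in the form above into a Python dictionary to make that structure visible. It does not call any Google Cloud API. The project ID qwiklabs-gcp-example and the helper build_sink are hypothetical, the filter is an assumed example, and the destination string follows the bigquery.googleapis.com/projects/.../datasets/... form that Cloud Logging uses for BigQuery destinations.

```python
import json


def build_sink(project_id: str, dataset_id: str, name: str, log_filter: str) -> dict:
    """Describe a log sink that exports matching entries to a BigQuery dataset."""
    destination = (
        f"bigquery.googleapis.com/projects/{project_id}/datasets/{dataset_id}"
    )
    return {"name": name, "destination": destination, "filter": log_filter}


sink = build_sink(
    project_id="qwiklabs-gcp-example",  # hypothetical; use your lab's Project ID
    dataset_id="bq_logs",               # dataset created in Task 2
    name="JobComplete",                 # sink name entered in the form
    log_filter='resource.type="bigquery_resource"',  # assumed example filter
)
print(json.dumps(sink, indent=2))
```

Only entries that match the filter and arrive after the sink exists are exported, which is why the next task generates fresh queries.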

Click Check my progress to verify the objective.

Task 5. Run example queries

To populate your new table with some logs, run some example queries.

- Navigate to Cloud Shell, then run each of the following BigQuery commands:

You should see the results of each query returned.
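For reference, a query command for the bq tool in Cloud Shell generally has the shape sketched below. This Python snippet only builds and prints such a command line; it does not run it. The SQL text is a hypothetical stand-in rather than one of the lab's example queries, and build_bq_query_command is an illustrative helper name.

```python
import shlex


def build_bq_query_command(sql: str) -> list:
    """Return the argument list for running one standard SQL query with bq."""
    # --use_legacy_sql=false selects standard SQL instead of legacy SQL.
    return ["bq", "query", "--use_legacy_sql=false", sql]


# Hypothetical stand-in query; substitute the queries given in the lab.
command = build_bq_query_command("SELECT 1 AS example")

# shlex.join quotes the SQL so the shell passes it as a single argument.
print(shlex.join(command))
```

Each command run this way produces a completed job, which the sink then exports to the bq_logs dataset.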

Click Check my progress to verify the objective.

Task 6. Viewing the logs in BigQuery

- Navigate back to BigQuery (Navigation menu > BigQuery).

- Expand your resource starting with the name qwiklabs-gcp- and expand your dataset bq_logs.

The name may vary, but you should see a "cloudaudit_googleapis_com_data_access" table.

- Click on the table name, then inspect the schema of the table and notice it has a very large number of fields.

If you clicked Preview and wondered why it doesn't show the logs for the recently run queries, it's because the logs are streamed into the table, which means that the new data can be queried but won't show up in Preview for a little while.

To make the table more usable, create a VIEW, which pulls out a subset of fields and also performs some calculations to derive a metric for query time.
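The query-time metric is simply the difference between a job's end and start timestamps. As a minimal sketch of that calculation, the Python below works on a hand-written sample; the key names startTime and endTime are assumed to mirror the job statistics in the log entry, and the timestamp values are invented for illustration.

```python
from datetime import datetime


def runtime_seconds(start_time: str, end_time: str) -> float:
    """Return the elapsed seconds between two ISO 8601 timestamps."""
    start = datetime.fromisoformat(start_time)
    end = datetime.fromisoformat(end_time)
    return (end - start).total_seconds()


# Hypothetical sample values, not taken from a real log entry.
sample_job_statistics = {
    "startTime": "2024-05-31T10:15:00+00:00",
    "endTime": "2024-05-31T10:15:07+00:00",
}

elapsed = runtime_seconds(
    sample_job_statistics["startTime"],
    sample_job_statistics["endTime"],
)
print(f"Query ran for {elapsed} seconds")
```

The view performs the equivalent subtraction in SQL for every exported entry, so you do not have to compute it row by row.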

- Click Compose New Query. In the BigQuery query editor, run the following SQL after replacing the project placeholder with your Project ID (you can copy the Project ID from the Lab Details panel on the left side of the lab page):

Click Check my progress to verify the objective.

- Now query the VIEW. Compose a new query, and run the following command:

- Scroll through the results of the executed queries.

Congratulations!

You successfully exported BigQuery logs from Cloud Logging to a BigQuery table, then analyzed them with SQL.

Next steps / Learn more

- Read through the Cloud Logging documentation.

- Read through the BigQuery documentation.

Google Cloud training and certification

...helps you make the most of Google Cloud technologies. Our classes include technical skills and best practices to help you get up to speed quickly and continue your learning journey. We offer fundamental to advanced level training, with on-demand, live, and virtual options to suit your busy schedule. Certifications help you validate and prove your skill and expertise in Google Cloud technologies.

Manual Last Updated: May 31, 2024

Lab Last Tested: May 31, 2024

Copyright 2024 Google LLC All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.