
Dataproc: Qwik Start - Console

GSP103

Overview

Dataproc is a fast, easy-to-use, fully-managed cloud service for running Apache Spark and Apache Hadoop clusters in a simpler, more cost-efficient way. Operations that used to take hours or days take seconds or minutes instead. Create Dataproc clusters quickly and resize them at any time, so you don't have to worry about your data pipelines outgrowing your clusters.

This lab shows you how to use the Google Cloud console to create a Dataproc cluster, run a simple Apache Spark job in the cluster, and then modify the number of workers in the cluster.

What you'll do

In this lab, you learn how to:

- Create a Dataproc cluster in the Google Cloud console

- Run a simple Apache Spark job

- Modify the number of workers in the cluster

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab. Remember, once you start, you cannot pause a lab.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a pop-up opens for you to select your payment method. On the left is the Lab Details panel with the following:

  - The Open Google Cloud console button

  - Time remaining

  - The temporary credentials that you must use for this lab

  - Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

{{{user_0.username | "Username"}}}

You can also find the Username in the Lab Details panel.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

{{{user_0.password | "Password"}}}

You can also find the Password in the Lab Details panel.

- Click Next.

Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

  - Accept the terms and conditions.

  - Do not add recovery options or two-factor authentication (because this is a temporary account).

  - Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Confirm Cloud Dataproc API is enabled

To create a Dataproc cluster in Google Cloud, the Cloud Dataproc API must be enabled. To confirm the API is enabled:

- Click Navigation menu > APIs & Services > Library.

- Type Cloud Dataproc in the Search for APIs & Services box. The console displays the Cloud Dataproc API in the search results.

- Click Cloud Dataproc API to display the status of the API. If the API is not already enabled, click Enable.

Once the API is enabled, proceed with the lab instructions.
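You can also perform the same check outside the console. The following minimal sketch assumes the optional google-cloud-service-usage Python package and Application Default Credentials, neither of which this lab uses. Service Usage names a service within a project as projects/PROJECT/services/SERVICE, and the Dataproc service is dataproc.googleapis.com. The helper function names are hypothetical.

```python
"""Sketch: check for and enable the Cloud Dataproc API (assumes google-cloud-service-usage)."""

DATAPROC_SERVICE = "dataproc.googleapis.com"


def service_resource_name(project: str, service: str = DATAPROC_SERVICE) -> str:
    # Service Usage resource name; the API's documented example uses a project number here.
    return f"projects/{project}/services/{service}"


def enable_dataproc_api(project: str) -> None:
    # Imported lazily so service_resource_name() works without the client library installed.
    from google.cloud import service_usage_v1

    client = service_usage_v1.ServiceUsageClient()
    name = service_resource_name(project)
    service = client.get_service(request={"name": name})
    if service.state != service_usage_v1.State.ENABLED:
        # enable_service returns a long-running operation; result() waits for it to finish.
        client.enable_service(request={"name": name}).result()
```

The console's Enable button performs this same state change, so use whichever method suits you.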

Grant storage permission to the service account

Creating a cluster requires the service account to have storage permissions. To assign the Storage Admin role to it:

- Go to Navigation menu > IAM & Admin > IAM.

- Click the pencil icon next to the service account whose address ends in -compute@developer.gserviceaccount.com (the Compute Engine default service account).

- Click + ADD ANOTHER ROLE, and then select the Storage Admin role.

- Click Save.
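Editing a principal's roles in the console amounts to a read-modify-write of the project's IAM policy: read the policy, add the member to the binding for the role, and write the policy back with the same etag. The sketch below shows only the modify step, as a pure function over a dict shaped like the JSON policy that the Resource Manager getIamPolicy method returns. The function name and the project number in the example member are hypothetical. Storage Admin's role ID is roles/storage.admin.

```python
from typing import Any, Dict

STORAGE_ADMIN = "roles/storage.admin"


def add_role_binding(policy: Dict[str, Any], role: str, member: str) -> Dict[str, Any]:
    """Return a copy of `policy` in which `member` holds `role` (hypothetical helper).

    `policy` is assumed to look like {"bindings": [{"role": ..., "members": [...]}], "etag": ...}.
    """
    # Copy each binding and its member list so the caller's policy is not mutated.
    bindings = [dict(b, members=list(b.get("members", []))) for b in policy.get("bindings", [])]
    for binding in bindings:
        # Reuse an existing unconditional binding for this role, if there is one.
        if binding.get("role") == role and "condition" not in binding:
            if member not in binding["members"]:
                binding["members"].append(member)
            break
    else:
        bindings.append({"role": role, "members": [member]})
    # Keep other top-level fields such as "etag" so setIamPolicy can detect concurrent edits.
    return {**policy, "bindings": bindings}


if __name__ == "__main__":
    sa = "serviceAccount:123456789012-compute@developer.gserviceaccount.com"  # hypothetical
    before = {"bindings": [{"role": "roles/editor", "members": [sa]}], "etag": "BwX"}
    print(add_role_binding(before, STORAGE_ADMIN, sa))
```

IAM expects service-account members to carry the serviceAccount: prefix. Running the function twice with the same arguments leaves the policy unchanged, which mirrors how the console behaves when the role has already been granted.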

Task 1. Create a cluster

- In the Google Cloud console, select Navigation menu > Dataproc > Clusters, then click Create cluster.

- Click Create for Cluster on Compute Engine.

- Set the following fields for your cluster and accept the default values for all other fields:

| Field | Value |
|---|---|
| Name | example-cluster |
| Region | |
| Zone | |
| Machine Series | E2 |
| Machine Type | e2-standard-2 |
| Number of Worker Nodes | 2 |
| Primary disk size | 30 GB |
| Internal IP only | Deselect "Configure all instances to have only internal IP addresses" |

Note: Specifying a region, such as us-central1 or europe-west1, isolates resources (including VM instances and Cloud Storage) and the metadata storage locations that Dataproc uses to that region.

- Click Create to create the cluster.

Your new cluster appears in the Clusters list. Creating it may take a few minutes. Its Status shows Provisioning until the cluster is ready to use, and then changes to Running.
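For reference, the form settings map onto the Dataproc v1 Cluster resource. The sketch below builds that resource as a plain dict, which the google-cloud-dataproc Python client accepts in place of message objects. Several points are assumptions: the package and credentials; the project ID, region, and zone (the lab supplies the region and zone); a single master node; and the master using the same machine type and disk size as the workers. The function names are hypothetical.

```python
from typing import Any, Dict


def _instance_group(num_instances: int) -> Dict[str, Any]:
    # Machine type and primary disk size from the table: e2-standard-2, 30 GB.
    return {
        "num_instances": num_instances,
        "machine_type_uri": "e2-standard-2",
        "disk_config": {"boot_disk_size_gb": 30},
    }


def example_cluster(project_id: str, zone: str) -> Dict[str, Any]:
    """Cluster resource mirroring the lab's console settings (hypothetical helper)."""
    return {
        "project_id": project_id,
        "cluster_name": "example-cluster",
        "config": {
            "gce_cluster_config": {
                "zone_uri": zone,
                # Equivalent to deselecting "Configure all instances to have only internal IP addresses".
                "internal_ip_only": False,
            },
            "master_config": _instance_group(1),  # assumed: one master node
            "worker_config": _instance_group(2),  # Number of Worker Nodes: 2
        },
    }


def create_cluster(project_id: str, region: str, zone: str):
    # Imported lazily so example_cluster() works without the client library installed.
    from google.cloud import dataproc_v1

    client = dataproc_v1.ClusterControllerClient(
        client_options={"api_endpoint": f"{region}-dataproc.googleapis.com:443"}
    )
    operation = client.create_cluster(
        request={"project_id": project_id, "region": region, "cluster": example_cluster(project_id, zone)}
    )
    # Waits for the long-running operation and returns the created Cluster.
    return operation.result()
```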

Test completed task

Click Check my progress to verify that you completed the task.

Task 2. Submit a job

To run a sample Spark job:

- Click Jobs in the left pane to switch to Dataproc's jobs view, then click Submit job.

- Set the following fields for the job, and accept the default values for all other fields:

| Field | Value |
|---|---|
| Region | |
| Cluster | example-cluster |
| Job type | Spark |
| Main class or jar | org.apache.spark.examples.SparkPi |
| Jar files | file:///usr/lib/spark/examples/jars/spark-examples.jar |
| Arguments | 1000 (This sets the number of tasks.) |

- Click Submit.

Your job appears in the Jobs list, which shows your project's jobs with their cluster, type, and current status. The job status displays as Running, and then as Succeeded after the job completes.
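The job form maps onto the Dataproc v1 Job resource. The minimal sketch below builds the same SparkPi job as a dict and submits it with the google-cloud-dataproc client. The package, credentials, project ID, and region are assumptions, and the function names are hypothetical. Job arguments are strings, so the task count is converted with str().

```python
from typing import Any, Dict


def spark_pi_job(cluster_name: str = "example-cluster", tasks: int = 1000) -> Dict[str, Any]:
    """Job resource matching the Submit job form in this task (hypothetical helper)."""
    return {
        "placement": {"cluster_name": cluster_name},
        "spark_job": {
            "main_class": "org.apache.spark.examples.SparkPi",
            "jar_file_uris": ["file:///usr/lib/spark/examples/jars/spark-examples.jar"],
            # SparkPi reads its first argument as the number of slices (tasks).
            "args": [str(tasks)],
        },
    }


def submit_spark_pi(project_id: str, region: str):
    # Imported lazily so spark_pi_job() works without the client library installed.
    from google.cloud import dataproc_v1

    client = dataproc_v1.JobControllerClient(
        client_options={"api_endpoint": f"{region}-dataproc.googleapis.com:443"}
    )
    operation = client.submit_job_as_operation(
        request={"project_id": project_id, "region": region, "job": spark_pi_job()}
    )
    # Waits for the long-running operation to finish and returns the Job.
    return operation.result()
```

You can reuse the same function without changes to rerun the job after resizing the cluster in Task 4.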

Test completed task

Click Check my progress to verify that you completed the task.

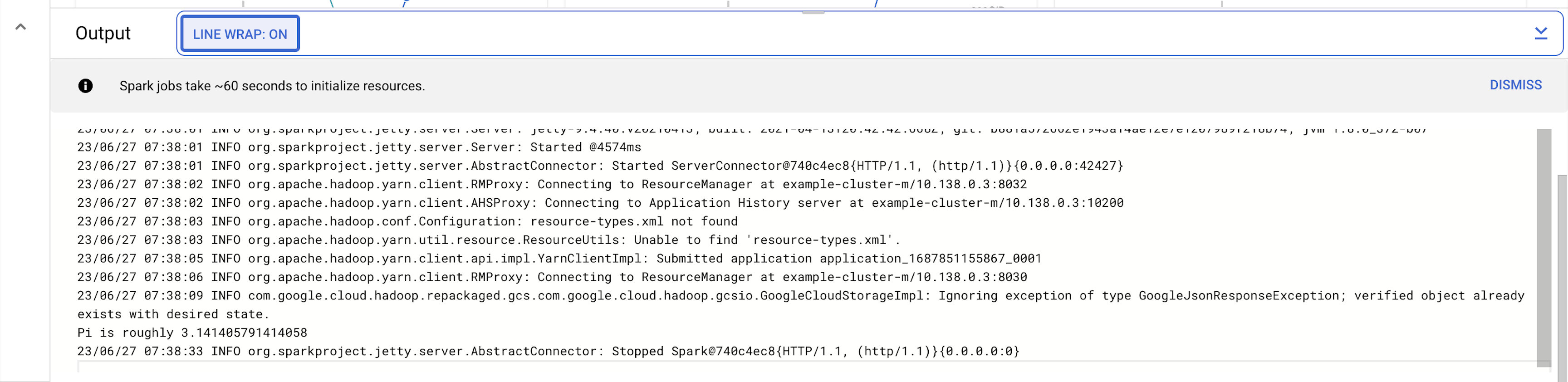

Task 3. View the job output

To see your completed job's output:

- Click the job ID in the Jobs list.

- Set LINE WRAP to ON, or scroll all the way to the right, to see the calculated value of Pi. With LINE WRAP ON, the output includes a line that begins with "Pi is roughly", followed by the estimate.

Your job has successfully calculated a rough value for pi!
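SparkPi estimates Pi by Monte Carlo sampling. It draws random points in the square [-1, 1] x [-1, 1] and counts how many land inside the unit circle. The fraction of points inside approaches Pi/4. The sketch below reproduces that logic on one machine in plain Python, based on our reading of Spark's bundled SparkPi example. The Arguments value (1000 in this lab) is the number of slices, meaning partitions and therefore tasks, and each slice contributes about 100,000 samples. The per-slice loop stands in for Spark's map step and the final sum for its reduce step. The function and parameter names are hypothetical.

```python
import math
import random
from typing import Optional

# Assumed from Spark's SparkPi example: 100,000 samples per slice, capped at Int.MaxValue.
SAMPLES_PER_SLICE = 100_000
INT_MAX = 2**31 - 1


def count_inside(num_samples: int, rng: random.Random) -> int:
    """Count random points in [-1, 1] x [-1, 1] that land inside the unit circle."""
    inside = 0
    for _ in range(num_samples):
        x = rng.random() * 2 - 1
        y = rng.random() * 2 - 1
        if x * x + y * y <= 1:
            inside += 1
    return inside


def estimate_pi(slices: int, samples_per_slice: int = SAMPLES_PER_SLICE, seed: Optional[int] = None) -> float:
    n = min(samples_per_slice * slices, INT_MAX)
    total = n - 1  # SparkPi samples the range 1 until n, i.e. n - 1 points
    # Split the samples across slices as evenly as possible (stand-in for Spark partitions).
    base, extra = divmod(total, slices)
    sizes = [base + (1 if i < extra else 0) for i in range(slices)]
    rng = random.Random(seed)
    # "Map": count hits per slice; "reduce": add the per-slice counts together.
    inside = sum(count_inside(size, rng) for size in sizes)
    # The fraction of hits approximates Pi / 4.
    return 4.0 * inside / total


if __name__ == "__main__":
    # A small run (2 slices, about 200,000 samples); the lab's 1000 slices would be about 100 million.
    estimate = estimate_pi(2, seed=42)
    print(f"Pi is roughly {estimate} (error {abs(estimate - math.pi):.4f})")
```

The error shrinks roughly with the square root of the sample count. This is one reason to raise the number of tasks and to add workers so that more of those tasks run in parallel.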

Task 4. Update a cluster to modify the number of workers

To change the number of worker instances in your cluster:

- Select Clusters in the left navigation pane to return to the Dataproc Clusters view.

- Click example-cluster in the Clusters list. By default, the page displays an overview of your cluster's CPU usage.

- Click Configuration to display your cluster's current settings.

- Click Edit. The number of worker nodes is now editable.

- Enter 4 in the Worker nodes field.

- Click Save.

Your cluster is now updated. Check the cluster's VM instances to confirm that it now has four worker nodes.
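Resizing the cluster is an update call with a field mask. Only the fields named in update_mask change, and the rest of the cluster configuration stays as it is. The minimal sketch below assumes the google-cloud-dataproc Python client, credentials, and your own project ID and region. The function names are hypothetical.

```python
from typing import Any, Dict


def resize_workers_request(project_id: str, region: str, cluster_name: str, num_workers: int) -> Dict[str, Any]:
    """UpdateCluster request that changes only the primary worker count (hypothetical helper)."""
    return {
        "project_id": project_id,
        "region": region,
        "cluster_name": cluster_name,
        # Partial cluster: only the field listed in update_mask is read.
        "cluster": {"config": {"worker_config": {"num_instances": num_workers}}},
        "update_mask": {"paths": ["config.worker_config.num_instances"]},
    }


def resize_workers(project_id: str, region: str, cluster_name: str = "example-cluster", num_workers: int = 4):
    # Imported lazily so resize_workers_request() works without the client library installed.
    from google.cloud import dataproc_v1

    client = dataproc_v1.ClusterControllerClient(
        client_options={"api_endpoint": f"{region}-dataproc.googleapis.com:443"}
    )
    operation = client.update_cluster(
        request=resize_workers_request(project_id, region, cluster_name, num_workers)
    )
    # Waits for the resize to finish and returns the updated Cluster.
    return operation.result()
```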

Test completed task

Click Check my progress to verify that you completed the task.

- To rerun the job on the updated cluster, click Jobs in the left pane, then click Submit job.

- Set the same fields that you set in Task 2:

| Field | Value |
|---|---|
| Region | |
| Cluster | example-cluster |
| Job type | Spark |
| Main class or jar | org.apache.spark.examples.SparkPi |
| Jar files | file:///usr/lib/spark/examples/jars/spark-examples.jar |
| Arguments | 1000 (This sets the number of tasks.) |

- Click Submit.


Congratulations!

Now you know how to use the Google Cloud console to create and update a Dataproc cluster and then submit a job in that cluster.

Next steps / Learn more

This lab is also part of a series of labs called Qwik Starts. These labs are designed to give you a little taste of the many features available with Google Cloud. Search for "Qwik Starts" in the lab catalog to find the next lab you'd like to take!

Google Cloud training and certification

...helps you make the most of Google Cloud technologies. Our classes include technical skills and best practices to help you get up to speed quickly and continue your learning journey. We offer fundamental to advanced level training, with on-demand, live, and virtual options to suit your busy schedule. Certifications help you validate and prove your skill and expertise in Google Cloud technologies.

Manual Last Updated March 21, 2024

Lab Last Tested March 21, 2024

Copyright 2024 Google LLC All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.