GSP416

Übersicht

BigQuery ist eine vollständig verwaltete, managementfreie und kostengünstige Analysedatenbank von Google. Mit diesem Tool können Sie mehrere Terabyte an Daten abfragen und müssen dabei weder eine Infrastruktur verwalten, noch benötigen Sie einen Datenbankadministrator. BigQuery basiert auf SQL und kann als „Pay as you go“-Modell genutzt werden. Mithilfe von BigQuery können Sie sich auf die Datenanalyse konzentrieren und wichtige Informationen erhalten.

In diesem Lab arbeiten Sie mit semistrukturierten Daten (Aufnahme von JSON-Daten bzw. Array-Datentypen) in BigQuery. Durch das Denormalisieren eines Schemas in eine einzelne Tabelle mit verschachtelten und wiederkehrenden Feldern lassen sich Leistungsverbesserungen erzielen. Die SQL-Syntax kann jedoch kompliziert sein, wenn mit Array-Daten gearbeitet wird. Sie üben, wie Sie verschiedene semistrukturierte Datasets laden und abfragen, Probleme beheben und Verschachtelungen aufheben.

Aufgaben

Aufgaben in diesem Lab:

- Semistrukturierte Daten laden und abfragen sowie Verschachtelungen aufheben

- Fehler bei Abfragen zu semistrukturierten Daten korrigieren

Einrichtung und Anforderungen

Vor dem Klick auf „Start Lab“ (Lab starten)

Lesen Sie diese Anleitung. Labs sind zeitlich begrenzt und können nicht pausiert werden. Der Timer beginnt zu laufen, wenn Sie auf Lab starten klicken, und zeigt Ihnen, wie lange Google Cloud-Ressourcen für das Lab verfügbar sind.

In diesem praxisorientierten Lab können Sie die Lab-Aktivitäten in einer echten Cloud-Umgebung durchführen – nicht in einer Simulations- oder Demo-Umgebung. Dazu erhalten Sie neue, temporäre Anmeldedaten, mit denen Sie für die Dauer des Labs auf Google Cloud zugreifen können.

Für dieses Lab benötigen Sie Folgendes:

- Einen Standardbrowser (empfohlen wird Chrome)

Hinweis: Nutzen Sie den privaten oder Inkognitomodus (empfohlen), um dieses Lab durchzuführen. So wird verhindert, dass es zu Konflikten zwischen Ihrem persönlichen Konto und dem Teilnehmerkonto kommt und zusätzliche Gebühren für Ihr persönliches Konto erhoben werden.

- Zeit für die Durchführung des Labs – denken Sie daran, dass Sie ein begonnenes Lab nicht unterbrechen können.

Hinweis: Verwenden Sie für dieses Lab nur das Teilnehmerkonto. Wenn Sie ein anderes Google Cloud-Konto verwenden, fallen dafür möglicherweise Kosten an.

Lab starten und bei der Google Cloud Console anmelden

-

Klicken Sie auf Lab starten. Wenn Sie für das Lab bezahlen müssen, wird ein Dialogfeld geöffnet, in dem Sie Ihre Zahlungsmethode auswählen können.

Auf der linken Seite befindet sich der Bereich „Details zum Lab“ mit diesen Informationen:

- Schaltfläche „Google Cloud Console öffnen“

- Restzeit

- Temporäre Anmeldedaten für das Lab

- Ggf. weitere Informationen für dieses Lab

-

Klicken Sie auf Google Cloud Console öffnen (oder klicken Sie mit der rechten Maustaste und wählen Sie Link in Inkognitofenster öffnen aus, wenn Sie Chrome verwenden).

Im Lab werden Ressourcen aktiviert. Anschließend wird ein weiterer Tab mit der Seite „Anmelden“ geöffnet.

Tipp: Ordnen Sie die Tabs nebeneinander in separaten Fenstern an.

Hinweis: Wird das Dialogfeld Konto auswählen angezeigt, klicken Sie auf Anderes Konto verwenden.

-

Kopieren Sie bei Bedarf den folgenden Nutzernamen und fügen Sie ihn in das Dialogfeld Anmelden ein.

{{{user_0.username | "Username"}}}

Sie finden den Nutzernamen auch im Bereich „Details zum Lab“.

-

Klicken Sie auf Weiter.

-

Kopieren Sie das folgende Passwort und fügen Sie es in das Dialogfeld Willkommen ein.

{{{user_0.password | "Password"}}}

Sie finden das Passwort auch im Bereich „Details zum Lab“.

-

Klicken Sie auf Weiter.

Wichtig: Sie müssen die für das Lab bereitgestellten Anmeldedaten verwenden. Nutzen Sie nicht die Anmeldedaten Ihres Google Cloud-Kontos.

Hinweis: Wenn Sie Ihr eigenes Google Cloud-Konto für dieses Lab nutzen, können zusätzliche Kosten anfallen.

-

Klicken Sie sich durch die nachfolgenden Seiten:

- Akzeptieren Sie die Nutzungsbedingungen.

- Fügen Sie keine Wiederherstellungsoptionen oder Zwei-Faktor-Authentifizierung hinzu (da dies nur ein temporäres Konto ist).

- Melden Sie sich nicht für kostenlose Testversionen an.

Nach wenigen Augenblicken wird die Google Cloud Console in diesem Tab geöffnet.

Hinweis: Wenn Sie auf Google Cloud-Produkte und ‑Dienste zugreifen möchten, klicken Sie auf das Navigationsmenü oder geben Sie den Namen des Produkts oder Dienstes in das Feld Suchen ein.

Die BigQuery Console öffnen

- Klicken Sie in der Google Cloud Console im Navigationsmenü auf BigQuery.

Zuerst wird das Fenster Willkommen bei BigQuery in der Cloud Console geöffnet, das neben allgemeinen Informationen auch einen Link zur Kurzanleitung und zu den Versionshinweisen enthält.

- Klicken Sie auf Fertig.

Die BigQuery Console wird geöffnet.

Aufgabe 1: Ein neues Dataset zum Speichern der Tabellen erstellen

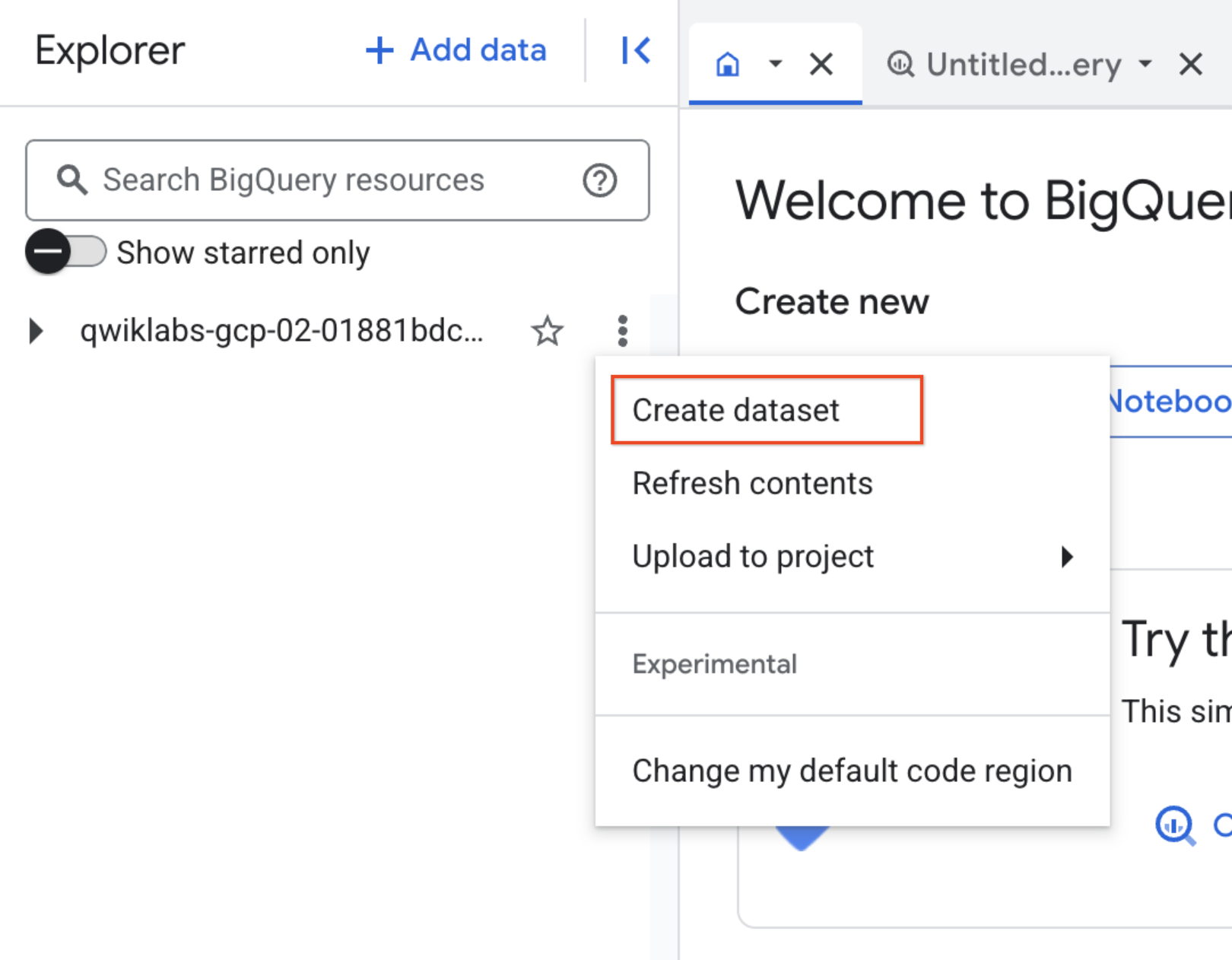

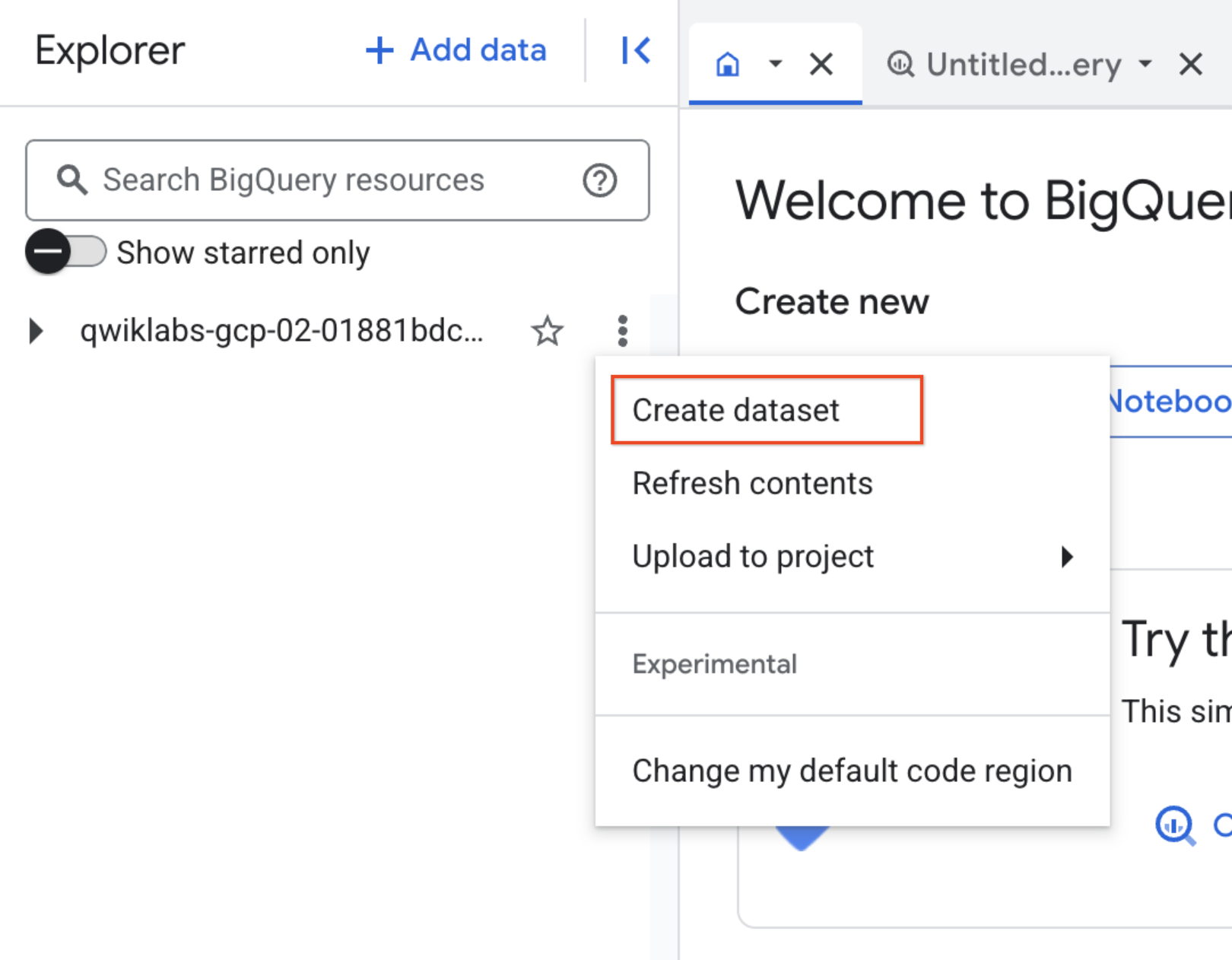

- Klicken Sie in BigQuery auf die drei Punkte neben der Projekt-ID und wählen Sie Dataset erstellen aus:

-

Legen Sie als Dataset-ID den Namen fruit_store fest. Für die restlichen Optionen können Sie die Standardwerte beibehalten (Speicherort der Daten, Standardablaufzeit).

-

Klicken Sie auf Dataset erstellen.

Aufgabe 2: Mit Arrays in SQL arbeiten

Normalerweise gibt es in SQL für jede Zeile einen einzigen Wert, wie z. B. in folgender Liste von Obstsorten:

|

Zeile

|

Obstsorte

|

|

1

|

Himbeere

|

|

2

|

Brombeere

|

|

3

|

Erdbeere

|

|

4

|

Kirsche

|

Was wäre aber, wenn Sie eine Liste von Obstsorten für jede Person im Geschäft erstellen möchten? Die Ausgabe könnte in diesem Fall ungefähr so aussehen:

|

Zeile

|

Obstsorte

|

Person

|

|

1

|

Himbeere

|

Sarah

|

|

2

|

Brombeere

|

Sarah

|

|

3

|

Erdbeere

|

Sarah

|

|

4

|

Kirsche

|

Sarah

|

|

5

|

Orange

|

Frederick

|

|

6

|

Apfel

|

Frederick

|

In einer traditionellen relationalen SQL-Datenbank würden Sie nach sich wiederholenden Namen suchen und die obige Tabelle in zwei separate Tabellen aufteilen, nämlich „Obstsorten“ und „Personen“. Das Konvertieren von einer in mehrere Tabellen nennt sich Normalisierung. Für transaktionale Datenbanken wie mySQL ist das eine gängige Methode.

Im Data-Warehouse-Prozess wird der Vorgang oft umgekehrt: Bei der Denormalisierung werden viele Einzeltabellen zu einer großen Berichtstabelle zusammengeführt.

Jetzt lernen Sie eine weitere Methode kennen, bei der Daten in verschiedenen Detaillierungsgraden mithilfe wiederkehrender Felder in einer Tabelle gespeichert werden:

|

Zeile

|

Obstsorte (Array)

|

Person

|

|

1

|

Himbeere

|

Sarah

|

|

Brombeere

|

|

Erdbeere

|

|

Kirsche

|

|

2

|

Orange

|

Frederick

|

|

Apfel

|

Was fällt Ihnen an der Tabelle auf?

- Sie hat nur zwei Zeilen.

- Es gibt mehrere Feldwerte für „Obstsorte" in einer Zeile.

- Die Personen sind mit allen Feldwerten verknüpft.

Es handelt sich hierbei um Besonderheiten des Datentyps Array.

So lässt sich das Obstsorten-Array leichter interpretieren:

|

Zeile

|

Obstsorte (Array)

|

Person

|

|

1

|

[Himbeere, Brombeere, Erdbeere, Kirsche]

|

Sarah

|

|

2

|

[Orange, Apfel]

|

Frederick

|

Beide Tabellen drücken exakt dasselbe aus. Daraus folgen zwei wichtige Erkenntnisse:

- Ein Array ist einfach eine Liste von Elementen in eckigen Klammern [ ].

- In BigQuery werden Arrays vereinfacht dargestellt. Die Werte im Array werden vertikal gelistet und gehören nach wie vor zu einer Zeile.

Sie können es selbst ausprobieren.

- Geben Sie dazu im BigQuery-Abfrageeditor Folgendes ein:

#standardSQL

SELECT

['raspberry', 'blackberry', 'strawberry', 'cherry'] AS fruit_array

-

Klicken Sie auf Ausführen.

-

Probieren Sie danach folgenden Befehl aus:

#standardSQL

SELECT

['raspberry', 'blackberry', 'strawberry', 'cherry', 1234567] AS fruit_array

Sie sollten jetzt eine Fehlermeldung erhalten, die so aussieht:

Error: Array elements of types {INT64, STRING} do not have a common supertype at [3:1]

Arrays basieren auf einem gemeinsamen Datentyp, z. B. nur Strings oder nur Zahlen.

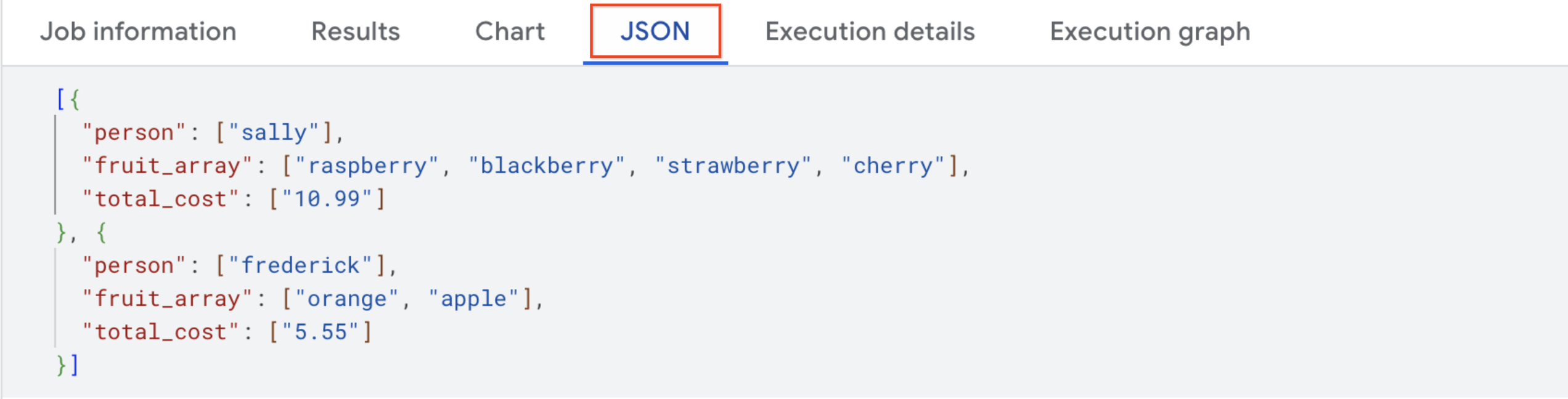

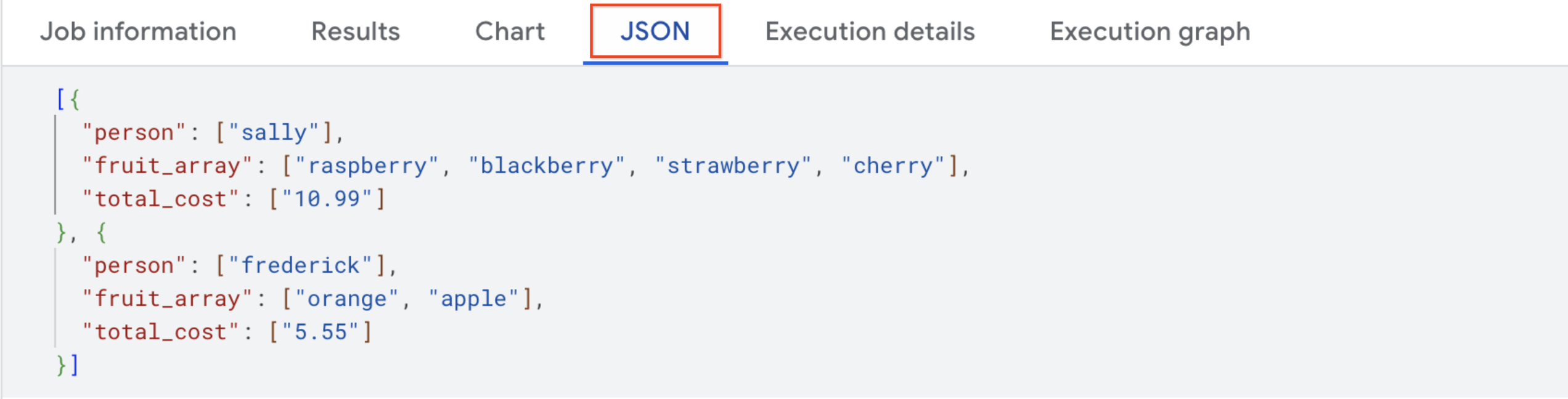

- Die endgültige Tabelle erhalten Sie mit folgender Abfrage:

#standardSQL

SELECT person, fruit_array, total_cost FROM `data-to-insights.advanced.fruit_store`;

-

Klicken Sie auf Ausführen.

-

Nachdem Sie sich die Ergebnisse angesehen haben, klicken Sie auf den Tab JSON, um die verschachtelte Struktur der Ergebnisse aufzurufen.

Semistrukturierte JSON-Daten in BigQuery laden

Angenommen, Sie haben eine JSON-Datei, die in BigQuery aufgenommen werden soll. Wie gehen Sie vor?

Erstellen Sie im Dataset eine neue Tabelle mit dem Namen fruit_details.

- Klicken Sie auf das Dataset

fruit_store.

Daraufhin wird die Option Tabelle erstellen angezeigt.

Hinweis: Möglicherweise müssen Sie das Browserfenster erweitern, um die Option „Tabelle erstellen“ zu sehen.

- Nehmen Sie dabei folgende Einstellungen vor:

-

Quelle: Wählen Sie im Drop-down Tabelle erstellen aus die Option Google Cloud Storage aus.

-

Wählen Sie die Datei aus dem Cloud Storage-Bucket aus:

cloud-training/data-insights-course/labs/optimizing-for-performance/shopping_cart.json

-

Dateiformat: JSONL (durch Zeilenumbruch getrenntes JSON)

-

Nennen Sie die neue Tabelle fruit_details.

-

Aktivieren Sie das Kästchen Schema (Automatisch erkennen).

-

Klicken Sie auf Tabelle erstellen.

Im Schema sehen Sie, dass fruit_array als REPEATED (wiederkehrend) markiert ist. Damit wird angezeigt, dass es sich um ein Array handelt.

Zusammenfassung

- In BigQuery werden Arrays nativ unterstützt.

- Alle Array-Werte müssen auf dem gleichen Datentyp basieren.

- Arrays werden in BigQuery REPEATED-Felder genannt.

Klicken Sie auf Fortschritt prüfen.

Neues Dataset und Tabelle erstellen, um die Daten zu speichern

Aufgabe 3: Eigene Arrays mit ARRAY_AGG() erstellen

Wenn Sie noch keine Arrays in Ihren Tabellen haben, können Sie sie erstellen.

- Untersuchen Sie dieses öffentliche Dataset durch Kopieren und Einfügen der folgenden Abfrage:

SELECT

fullVisitorId,

date,

v2ProductName,

pageTitle

FROM `data-to-insights.ecommerce.all_sessions`

WHERE visitId = 1501570398

ORDER BY date

- Klicken Sie auf Ausführen und sehen Sie sich die Ergebnisse an.

Verwenden Sie jetzt die Funktion ARRAY_AGG(), um unsere Stringwerte in einem Array zu aggregieren.

- Untersuchen Sie dieses öffentliche Dataset durch Kopieren und Einfügen der folgenden Abfrage:

SELECT

fullVisitorId,

date,

ARRAY_AGG(v2ProductName) AS products_viewed,

ARRAY_AGG(pageTitle) AS pages_viewed

FROM `data-to-insights.ecommerce.all_sessions`

WHERE visitId = 1501570398

GROUP BY fullVisitorId, date

ORDER BY date

- Klicken Sie auf Ausführen und sehen Sie sich die Ergebnisse an.

- Zählen Sie mit der Funktion

ARRAY_LENGTH() anschließend die Seiten und Produkte, die angesehen wurden:

SELECT

fullVisitorId,

date,

ARRAY_AGG(v2ProductName) AS products_viewed,

ARRAY_LENGTH(ARRAY_AGG(v2ProductName)) AS num_products_viewed,

ARRAY_AGG(pageTitle) AS pages_viewed,

ARRAY_LENGTH(ARRAY_AGG(pageTitle)) AS num_pages_viewed

FROM `data-to-insights.ecommerce.all_sessions`

WHERE visitId = 1501570398

GROUP BY fullVisitorId, date

ORDER BY date

- Jetzt deduplizieren Sie die Seiten und Produkte, um zu sehen, wie viele einzelne Produkte aufgerufen wurden. Fügen Sie dafür

DISTINCT zu ARRAY_AGG() hinzu:

SELECT

fullVisitorId,

date,

ARRAY_AGG(DISTINCT v2ProductName) AS products_viewed,

ARRAY_LENGTH(ARRAY_AGG(DISTINCT v2ProductName)) AS distinct_products_viewed,

ARRAY_AGG(DISTINCT pageTitle) AS pages_viewed,

ARRAY_LENGTH(ARRAY_AGG(DISTINCT pageTitle)) AS distinct_pages_viewed

FROM `data-to-insights.ecommerce.all_sessions`

WHERE visitId = 1501570398

GROUP BY fullVisitorId, date

ORDER BY date

Klicken Sie auf Fortschritt prüfen.

Abfrage ausführen, um die Anzahl der einzelnen aufgerufenen Produkte zu erhalten

Zusammenfassung

Mit Arrays lassen sich viele nützliche Dinge tun:

- Anzahl der Elemente finden:

ARRAY_LENGTH(<Array>)

- Elemente deduplizieren:

ARRAY_AGG(DISTINCT <field>)

- Elemente sortieren:

ARRAY_AGG(<Feld> ORDER BY <field>)

- Die Anzahl der Ergebnisse begrenzen:

ARRAY_AGG(<field> LIMIT 5)

Aufgabe 4: Abfragetabellen mit Arrays

Im öffentlichen BigQuery-Dataset für Google Analytics bigquery-public-data.google_analytics_sample sind viel mehr Felder und Zeilen vorhanden als im Dataset data-to-insights.ecommerce.all_sessions für dieses Lab. Noch interessanter ist jedoch, dass die Feldwerte wie Produkte, Seiten und Transaktionen bereits nativ als Arrays gespeichert sind.

- Untersuchen Sie die verfügbaren Daten durch Kopieren und Einfügen der folgenden Abfrage. Suchen Sie dabei nach Feldern mit wiederkehrenden Werten (Arrays):

SELECT

*

FROM `bigquery-public-data.google_analytics_sample.ga_sessions_20170801`

WHERE visitId = 1501570398

-

Klicken Sie auf Ausführen.

-

Scrollen Sie in den Ergebnissen nach rechts, bis Sie zum Feld hits.product.v2ProductName gelangen. Wir behandeln demnächst mehrere Feldaliasse.

Die Anzahl der Felder, die im Google Analytics-Schema zur Verfügung steht, kann die Analyse überlasten.

- Sie sollten daher nur die Felder „visit“ und „page name“ abfragen:

SELECT

visitId,

hits.page.pageTitle

FROM `bigquery-public-data.google_analytics_sample.ga_sessions_20170801`

WHERE visitId = 1501570398

Sie erhalten einen Fehler:

Fehler:Cannot access field page on a value with type ARRAY<STRUCT<hitNumber INT64, time INT64, hour INT64, ...>> at [3:8]

Bevor Sie REPEATED-Felder (Arrays) wie gewohnt abfragen können, müssen die Arrays wieder in Zeilen strukturiert werden.

Das Array für hits.page.pageTitle ist zurzeit als einzelne Zeile gespeichert:

['homepage','product page','checkout']

Es sollte aber so aussehen:

['homepage',

'product page',

'checkout']

Wie können Sie das mit SQL machen?

Antwort: Nutzen Sie dazu die Funktion UNNEST() in Ihrem Array-Feld:

SELECT DISTINCT

visitId,

h.page.pageTitle

FROM `bigquery-public-data.google_analytics_sample.ga_sessions_20170801`,

UNNEST(hits) AS h

WHERE visitId = 1501570398

LIMIT 10

Mehr zu UNNEST() später. Wichtig für den Moment ist Folgendes:

- Mit UNNEST() werden Array-Elemente wieder in Zeilen angeordnet.

- UNNEST() folgt immer auf den Tabellennamen in der FROM-Anweisung. Sie können sich das wie eine vorab verknüpfte Tabelle vorstellen.

Klicken Sie auf Fortschritt prüfen.

Abfrage ausführen, um UNNEST() auf das Array-Feld anzuwenden

Aufgabe 5: Einführung in STRUCTs

Sie fragen sich vielleicht, warum der Feldalias hit.page.pageTitle wie drei Felder aussieht, die jeweils durch Punkte getrennt sind. So wie Sie mit ARRAY-Werten tief in den Detaillierungsgrad der Felder eintauchen, können Sie mit einem anderen Datentyp durch Gruppierung verwandter Felder in die Breite des Schemas gehen. Dieser SQL-Datentyp heißt STRUCT.

Einen STRUCT kann man sich wie eine separate Tabelle vorstellen, die schon vorab mit Ihrer Haupttabelle verknüpft wurde.

Ein STRUCT zeichnet sich durch Folgendes aus:

- Es kann ein oder mehrere Felder haben.

- Es kann für jedes Feld dieselben oder unterschiedliche Datentypen haben.

- Es kann seinen eigenen Alias haben.

Klingt zunächst einmal wie eine ganz normale Tabelle.

Ein Dataset mit STRUCTs untersuchen

-

Zum Öffnen des Datasets bigquery-public-data klicken Sie auf +Daten hinzufügen. Wählen Sie dann Projekt nach Name markieren aus und geben Sie den Namen bigquery-public-data ein.

-

Klicken Sie auf Markieren.

Das Projekt bigquery-public-data wird im Abschnitt „Explorer“ aufgelistet.

-

Öffnen Sie bigquery-public-data.

-

Öffnen Sie darin das Dataset google_analytics_sample.

-

Klicken Sie auf die Tabelle ga_sessions_ (366).

-

Scrollen Sie durch das Schema und beantworten Sie die folgende Frage. Nutzen Sie dazu die Suchfunktion des Browsers.

Für moderne E-Commerce-Websites werden sehr viele Sitzungsdaten gespeichert.

Der eigentliche Vorteil von 32 STRUCTs in einer Tabelle ist, dass Sie Abfragen dieser Art ohne JOINs ausführen können:

SELECT

visitId,

totals.*,

device.*

FROM `bigquery-public-data.google_analytics_sample.ga_sessions_20170801`

WHERE visitId = 1501570398

LIMIT 10

Hinweis:: Mit der Syntax .* erhält BigQuery die Anweisung, alle Felder für diesen STRUCT zurückzugeben. Das wäre auch so, wenn totals.* eine separate Tabelle wäre, die Sie mit dieser zusammenführen.

Wenn Sie große Berichtstabellen als STRUCTs (vorab verknüpfte „Tabellen“) und ARRAYs (mit hohem Detaillierungsgrad) speichern, bringt das folgende Vorteile:

- Eine signifikant höhere Leistung, weil 32 Tabellen-JOINs vermieden werden.

- Detaillierte Daten von ARRAYs, wenn Sie sie brauchen. Wenn nicht, entstehen aber keine Nachteile, weil BigQuery jede Spalte einzeln auf der Festplatte speichert.

- Alle geschäftlichen Daten sind in einer Tabelle gespeichert. Sie müssen also nicht auf JOIN-Schlüssel achten oder überlegen, in welchen Tabellen die gewünschten Daten enthalten sind.

Aufgabe 6: Übung mit STRUCTs und ARRAYs

Das nächste Dataset enthält Rundenzeiten von Läufern. Jede Runde wird als „Split“ bezeichnet.

- Probieren Sie mit der folgenden Abfrage die STRUCT-Syntax aus. Sehen Sie sich dabei die verschiedenen Feldtypen innerhalb des STRUCT-Containers an:

#standardSQL

SELECT STRUCT("Rudisha" as name, 23.4 as split) as runner

|

Zeile

|

runner.name

|

runner.split

|

|

1

|

Rudisha

|

23.4

|

Was fällt Ihnen an den Feldaliassen auf? Da der STRUCT-Container verschachtelte Felder enthält ("name" und "split" sind Teilmengen von "runner"), wird eine Punktnotation verwendet.

Wie gehen Sie vor, wenn für einen Läufer mehrere Splits in einem Rennen vorhanden sind, wie etwa verschiedene Rundenzeiten?

In diesem Fall eignet sich ein Array.

- Führen Sie dazu die folgende Abfrage aus:

#standardSQL

SELECT STRUCT("Rudisha" as name, [23.4, 26.3, 26.4, 26.1] as splits) AS runner

|

Zeile

|

runner.name

|

runner.splits

|

|

1

|

Rudisha

|

23.4

|

|

26.3

|

|

26.4

|

|

26.1

|

Zusammenfassung:

- STRUCTs sind Container, die mehrere verschachtelte Feldnamen und Datentypen enthalten können.

- Arrays sind die Feldtypen innerhalb eines STRUCT-Containers, wie Sie oben im „splits“-Feld sehen können.

JSON-Daten aufnehmen

-

Erstellen Sie ein neues Dataset mit dem Namen racing.

-

Klicken Sie auf das Dataset racing und dann auf Tabelle erstellen.

Hinweis: Möglicherweise müssen Sie das Browserfenster erweitern, um die Option „Tabelle erstellen“ zu sehen.

-

Quelle: Wählen Sie im Drop-down Tabelle erstellen aus die Option Google Cloud Storage aus.

-

Wählen Sie die Datei aus dem Cloud Storage-Bucket aus:

cloud-training/data-insights-course/labs/optimizing-for-performance/race_results.json

-

Dateiformat: JSONL (durch Zeilenumbruch getrenntes JSON)

- Klicken Sie unter Schema auf den Schieberegler Als Text bearbeiten und fügen Sie folgenden Code hinzu:

[

{

"name": "race",

"type": "STRING",

"mode": "NULLABLE"

},

{

"name": "participants",

"type": "RECORD",

"mode": "REPEATED",

"fields": [

{

"name": "name",

"type": "STRING",

"mode": "NULLABLE"

},

{

"name": "splits",

"type": "FLOAT",

"mode": "REPEATED"

}

]

}

]

-

Nennen Sie die neue Tabelle race_results.

-

Klicken Sie auf Tabelle erstellen.

-

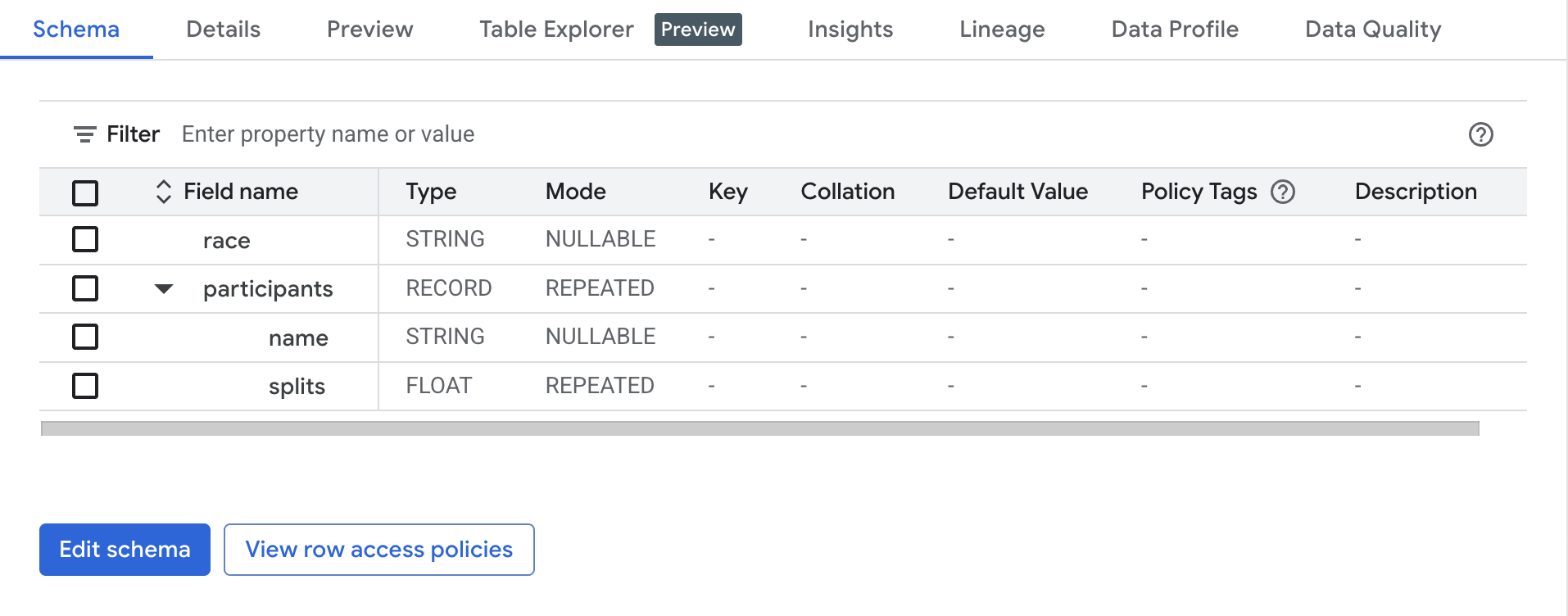

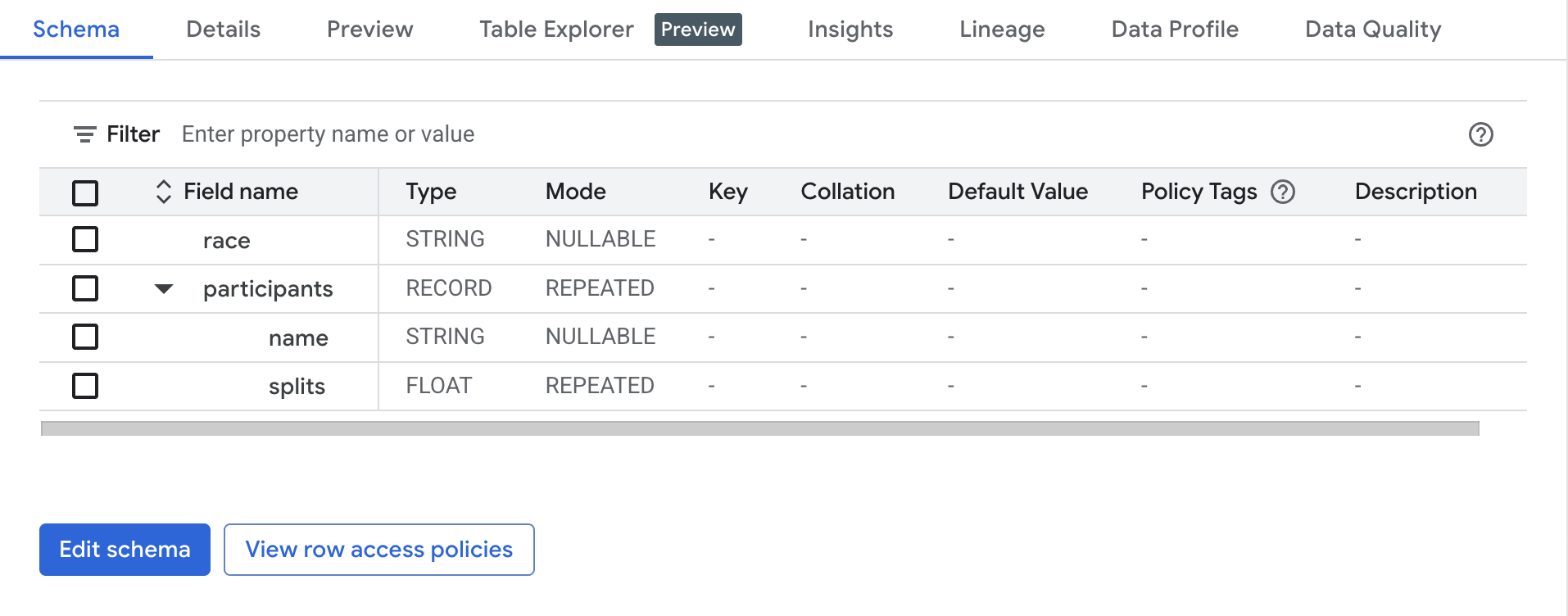

Lassen Sie sich nach dem erfolgreichen Ladevorgang eine Vorschau des Schemas für die neu erstellte Tabelle anzeigen:

Welches Feld enthält den STRUCT? Woran erkennen Sie das?

Das Feld participants ist der STRUCT, da es vom Typ RECORD ist.

Welches Feld enthält das ARRAY?

Das Feld participants.splits ist ein Array aus Gleitkommazahlen innerhalb des STRUCTs participants. Es hat den REPEATED-Modus, was auf einen Array hinweist. Werte aus diesem Array werden als verschachtelte Werte bezeichnet, da es sich um mehrere Werte in einem einzelnen Feld handelt.

Klicken Sie auf Fortschritt prüfen.

Dataset und Tabelle erstellen, um JSON-Daten aufzunehmen.

Verschachtelte und wiederkehrende Felder abfragen

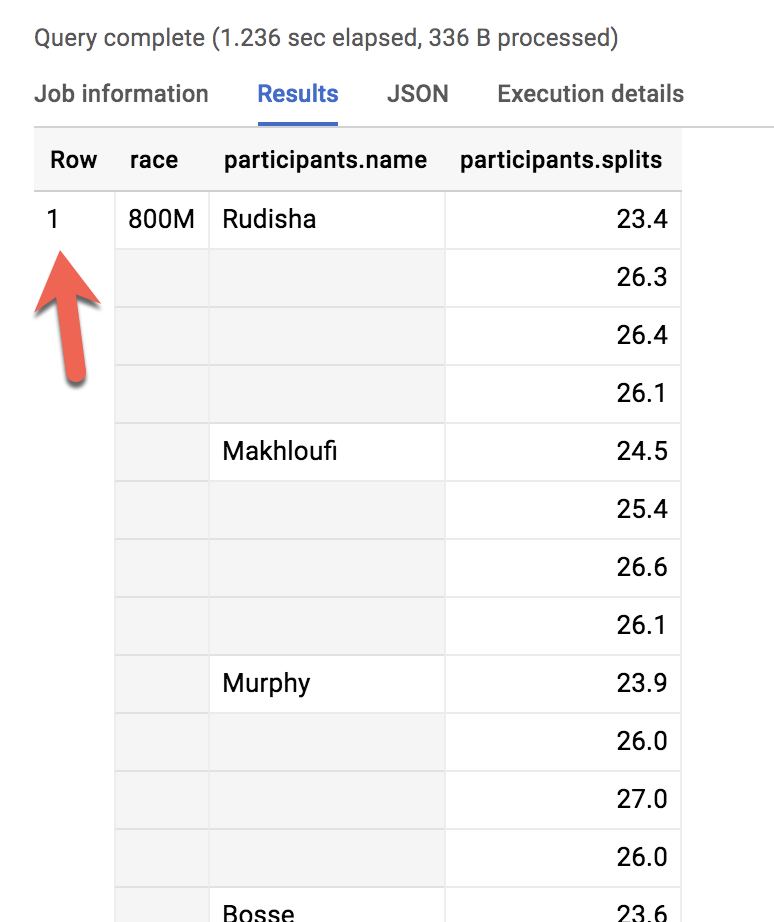

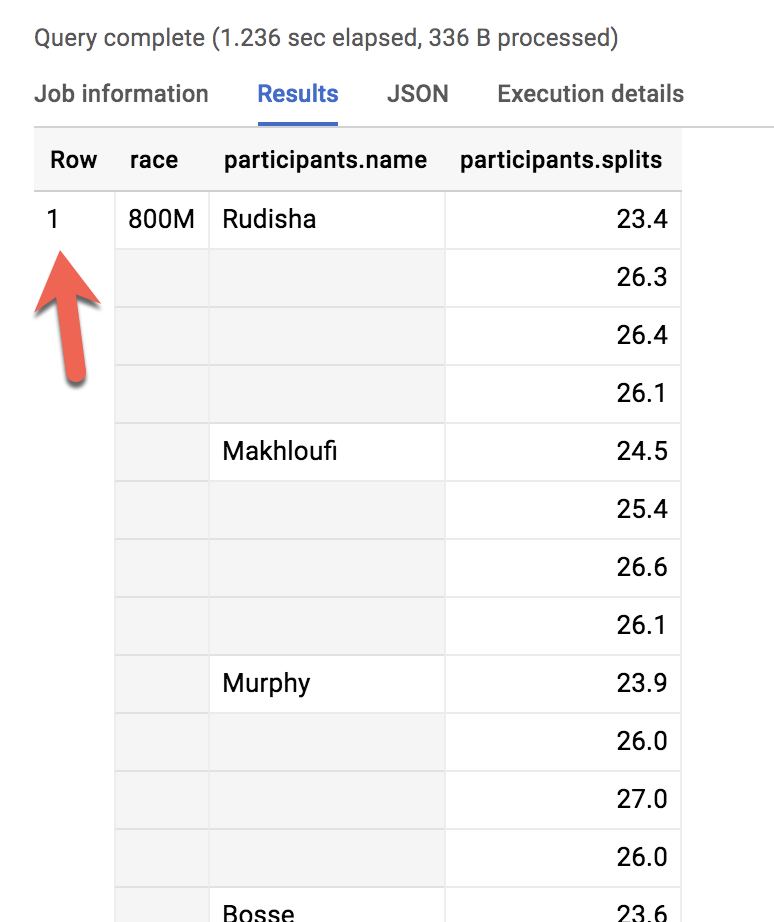

- Sehen wir uns alle Teilnehmenden des 800 Meter-Rennens an:

#standardSQL

SELECT * FROM racing.race_results

Wie viele Zeilen wurden zurückgegeben?

Antwort: 1

Wie können Sie den Namen aller Läufer und den Typ des Rennens auflisten?

- Führen Sie das folgende Schema aus und sehen Sie sich an, was passiert:

#standardSQL

SELECT race, participants.name

FROM racing.race_results

Fehler: Cannot access field name on a value with type ARRAY<STRUCT<name STRING, splits ARRAY<FLOAT64>>>> at [2:27]

Ähnlich wie beim Weglassen von GROUP BY bei Aggregationsfunktionen werden zwei verschiedene Detaillierungsgrade dargestellt: Eine Zeile für das Rennen und drei Zeilen für die Namen der Teilnehmer. Sie müssen also…

|

Zeile

|

race

|

participants.name

|

|

1

|

800 m

|

Rudisha

|

|

2

|

???

|

Makhloufi

|

|

3

|

???

|

Murphy

|

… in Folgendes ändern:

|

Zeile

|

race

|

participants.name

|

|

1

|

800 m

|

Rudisha

|

|

2

|

800 m

|

Makhloufi

|

|

3

|

800 m

|

Murphy

|

Wie würden Sie bei einer traditionellen relationalen SQL-Datenbank vorgehen, wenn Sie eine „races"-Tabelle und eine „participants"-Tabelle haben und Informationen aus beiden Tabellen erhalten möchten? Sie würden sie mit einem JOIN zusammenführen. Das „participant"-STRUCT ähnelt konzeptionell stark einer Tabelle und ist bereits Teil Ihrer „races"-Tabelle, korreliert jedoch nicht korrekt mit dem Nicht-STRUCT-Feld „race".

Fällt Ihnen ein SQL-Befehl aus zwei Wörtern ein, mit dem Sie das 800 Meter-Rennen mit den Läufern aus der ersten Tabelle korrelieren können?

Antwort: CROSS JOIN

Prima!

- Führen Sie jetzt Folgendes aus:

#standardSQL

SELECT race, participants.name

FROM racing.race_results

CROSS JOIN

participants # this is the STRUCT (it is like a table within a table)

Table name "participants" missing dataset while no default dataset is set in the request.

Obwohl der „participants“-STRUCT einer Tabelle ähnelt, ist er eigentlich immer noch ein Feld in der Tabelle racing.race_results.

- Fügen Sie der Abfrage den Dataset-Namen hinzu:

#standardSQL

SELECT race, participants.name

FROM racing.race_results

CROSS JOIN

race_results.participants # full STRUCT name

- Klicken Sie auf Ausführen.

Sehr gut. Sie haben erfolgreich alle Läufer für jedes Rennen aufgelistet.

|

Zeile

|

race

|

name

|

|

1

|

800 m

|

Rudisha

|

|

2

|

800 m

|

Makhloufi

|

|

3

|

800 m

|

Murphy

|

|

4

|

800 m

|

Bosse

|

|

5

|

800 m

|

Rotich

|

|

6

|

800 m

|

Lewandowski

|

|

7

|

800 m

|

Kipketer

|

|

8

|

800 m

|

Berian

|

- Die letzte Abfrage können Sie vereinfachen, indem Sie:

- einen Alias für die ursprüngliche Tabelle hinzufügen

- die Wörter „CROSS JOIN" durch ein Komma ersetzen (ein Komma impliziert einen Cross Join)

Sie erhalten dann das gleiche Abfrageergebnis:

#standardSQL

SELECT race, participants.name

FROM racing.race_results AS r, r.participants

Würde ein CROSS JOIN bei mehreren Renntypen (800 m, 100 m, 200 m) nicht einfach jeden Läufernamen als kartesisches Produkt mit jedem möglichen Renntypen verknüpfen?

Antwort: Nein. Es handlet sich hier um einen korrelierten Cross Join, mit dem nur die Elemente entpackt werden, die mit einer einzelnen Zeile verknüpft sind. Weitere Informationen finden Sie in der Dokumentation Mit Arrays arbeiten.

Zusammenfassung zu STRUCTs:

- Ein SQL-STRUCT ist einfach ein Container mit anderen Datenfeldern, die verschiedene Datentypen enthalten. Das Wort „STRUCT“ leitet sich von „Datenstruktur“ ab. Rufen Sie sich noch einmal das vorherige Beispiel ins Gedächtnis:

STRUCT(``"Rudisha" as name, [23.4, 26.3, 26.4, 26.1] as splits``)`` AS runner

- STRUCTs erhalten einen Alias, z. B. „runner“, und man kann sie sich als Tabelle in einer Haupttabelle vorstellen.

- STRUCTs und ARRAYs müssen entpackt werden, bevor Sie mit ihren Elementen arbeiten können. Umschließen Sie den Namen des STRUCTs oder das als Array fungierende STRUCT-Feld mit einem UNNEST()-Befehl, um den STRUCT zu entpacken und vereinfachen.

Aufgabe 7: Lab-Frage: STRUCT()

Beantworten Sie die Fragen unten anhand der Tabelle racing.race_results, die Sie bereits erstellt haben.

Aufgabe: Führen Sie eine COUNT-Abfrage aus, um zu ermitteln, wie viele Läufer insgesamt am Rennen teilgenommen haben.

- Als Hilfe können Sie die folgende unvollständige Abfrage verwenden:

#standardSQL

SELECT COUNT(participants.name) AS racer_count

FROM racing.race_results

Hinweis: Denken Sie daran, per Cross Join-Abfrage im Struct-Namen hinter der FROM-Anweisung eine zusätzliche Datenquelle anzugeben.

Mögliche Lösung:

#standardSQL

SELECT COUNT(p.name) AS racer_count

FROM racing.race_results AS r, UNNEST(r.participants) AS p

Antwort: Am Rennen haben 8 Läufer teilgenommen.

Klicken Sie auf Fortschritt prüfen.

Abfrage mit COUNT ausführen, um die Gesamtzahl der Läufer zu ermitteln

Aufgabe 8: Lab-Frage: Arrays mit UNNEST() entpacken

Schreiben Sie eine Abfrage, mit der die Laufzeit der Teilnehmenden aufgelistet wird, deren Name mit R beginnt. Ordnen Sie die Liste nach der Gesamtzeit und beginnen Sie mit der schnellsten Zeit. Verwenden Sie den Operator UNNEST() in der folgenden unvollständigen Abfrage.

- Vervollständigen Sie die Abfrage:

#standardSQL

SELECT

p.name,

SUM(split_times) as total_race_time

FROM racing.race_results AS r

, r.participants AS p

, p.splits AS split_times

WHERE

GROUP BY

ORDER BY

;

Hinweis:

- Sie müssen sowohl den STRUCT als auch das Array innerhalb des STRUCTs als Datenquellen nach der FROM-Anweisung entpacken.

- Verwenden Sie ggf. Aliasse.

Mögliche Lösung:

#standardSQL

SELECT

p.name,

SUM(split_times) as total_race_time

FROM racing.race_results AS r

, UNNEST(r.participants) AS p

, UNNEST(p.splits) AS split_times

WHERE p.name LIKE 'R%'

GROUP BY p.name

ORDER BY total_race_time ASC;

|

Zeile

|

name

|

total_race_time

|

|

1

|

Rudisha

|

102.19999999999999

|

|

2

|

Rotich

|

103.6

|

Klicken Sie auf Fortschritt prüfen.

Abfrage ausführen, um die Laufzeit von Teilnehmenden aufzulisten, deren Name mit R beginnt

Aufgabe 9: Innerhalb von Array-Werten filtern

Sie haben gesehen, dass die schnellste Rundenzeit, die für das 800-Meter-Rennen aufgezeichnet wurde, 23,2 Sekunden betrug. Sie haben jedoch nicht herausgefunden, welcher Läufer diese Runde gelaufen ist. Erstellen Sie eine Abfrage, mit der das ausgegeben wird.

- Vervollständigen Sie die Abfrage:

#standardSQL

SELECT

p.name,

split_time

FROM racing.race_results AS r

, r.participants AS p

, p.splits AS split_time

WHERE split_time = ;

Mögliche Lösung:

#standardSQL

SELECT

p.name,

split_time

FROM racing.race_results AS r

, UNNEST(r.participants) AS p

, UNNEST(p.splits) AS split_time

WHERE split_time = 23.2;

|

Zeile

|

name

|

split_time

|

|

1

|

Kipketer

|

23.2

|

Klicken Sie auf Fortschritt prüfen.

Führen Sie die Abfrage aus, um herauszufinden, welcher Läufer die kürzeste Rundenzeit hatte.

Das war's!

Sie haben erfolgreich JSON-Datasets aufgenommen, ARRAYs und STRUCTs erstellt und die Verschachtelung semistrukturierter Daten aufgehoben, um neue Informationen abzurufen.

Weitere Informationen

- Weitere Informationen erhalten Sie in der Dokumentation Mit Arrays arbeiten.

- Labs zum Vertiefen Ihres Wissens:

Google Cloud-Schulungen und -Zertifizierungen

In unseren Schulungen erfahren Sie alles zum optimalen Einsatz unserer Google Cloud-Technologien und können sich entsprechend zertifizieren lassen. Unsere Kurse vermitteln technische Fähigkeiten und Best Practices, damit Sie möglichst schnell mit Google Cloud loslegen und Ihr Wissen fortlaufend erweitern können. Wir bieten On-Demand-, Präsenz- und virtuelle Schulungen für Anfänger wie Fortgeschrittene an, die Sie individuell in Ihrem eigenen Zeitplan absolvieren können. Mit unseren Zertifizierungen weisen Sie nach, dass Sie Experte im Bereich Google Cloud-Technologien sind.

Anleitung zuletzt am 08. Mai 2025 aktualisiert

Lab zuletzt am 08. Mai 2025 getestet

© 2025 Google LLC. Alle Rechte vorbehalten. Google und das Google-Logo sind Marken von Google LLC. Alle anderen Unternehmens- und Produktnamen können Marken der jeweils mit ihnen verbundenen Unternehmen sein.