Checkpoints

Disable and re-enable the Dataflow API

/ 10

Create a Cloud Storage Bucket

/ 10

Copy Files to Your Bucket

/ 10

Create the BigQuery Dataset (name: lake)

/ 20

Build a Data Ingestion Dataflow Pipeline

/ 10

Build a Data Transformation Dataflow Pipeline

/ 10

Build a Data Enrichment Dataflow Pipeline

/ 10

Build a Data lake to Mart Dataflow Pipeline

/ 20

ETL Processing on Google Cloud Using Dataflow and BigQuery (Python)

- GSP290

- Overview

- Setup and requirements

- Task 1. Ensure that the Dataflow API is successfully enabled

- Task 2. Download the starter code

- Task 3. Create a Cloud Storage bucket

- Task 4. Copy files to your bucket

- Task 5. Create a BigQuery dataset

- Task 6. Build a Dataflow pipeline

- Task 7. Data ingestion with a Dataflow Pipeline

- Task 8. Review pipeline Python code

- Task 9. Run the Apache Beam pipeline

- Task 10. Data transformation

- Task 11. Run the Dataflow transformation pipeline

- Task 12. Data enrichment

- Task 13. Review data enrichment pipeline ython code

- Task 14. Run the Data Enrichment Dataflow pipeline

- Task 15. Data lake to Mart and Review pipeline python code

- Task 16. Run the Apache Beam Pipeline to perform the Data Join and create the resulting table in BigQuery

- Test your understanding

- Congratulations!

GSP290

Overview

In Google Cloud, you can build data pipelines that execute Python code to ingest and transform data from publicly available datasets into BigQuery using these Google Cloud services:

- Cloud Storage

- Dataflow

- BigQuery

In this lab, you use these services to create your own data pipeline, including the design considerations and implementation details, to ensure that your prototype meets the requirements. Be sure to open the Python files and read the comments when instructed.

What you'll do

In this lab, you learn how to:

- Build and run Dataflow pipelines (Python) for data ingestion

- Build and run Dataflow pipelines (Python) for data transformation and enrichment

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab---remember, once you start, you cannot pause a lab.

How to start your lab and sign in to the Google Cloud console

-

Click the Start Lab button. If you need to pay for the lab, a pop-up opens for you to select your payment method. On the left is the Lab Details panel with the following:

- The Open Google Cloud console button

- Time remaining

- The temporary credentials that you must use for this lab

- Other information, if needed, to step through this lab

-

Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

Note: If you see the Choose an account dialog, click Use Another Account. -

If necessary, copy the Username below and paste it into the Sign in dialog.

{{{user_0.username | "Username"}}} You can also find the Username in the Lab Details panel.

-

Click Next.

-

Copy the Password below and paste it into the Welcome dialog.

{{{user_0.password | "Password"}}} You can also find the Password in the Lab Details panel.

-

Click Next.

Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials. Note: Using your own Google Cloud account for this lab may incur extra charges. -

Click through the subsequent pages:

- Accept the terms and conditions.

- Do not add recovery options or two-factor authentication (because this is a temporary account).

- Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Activate Cloud Shell

Cloud Shell is a virtual machine that is loaded with development tools. It offers a persistent 5GB home directory and runs on the Google Cloud. Cloud Shell provides command-line access to your Google Cloud resources.

- Click Activate Cloud Shell

at the top of the Google Cloud console.

When you are connected, you are already authenticated, and the project is set to your Project_ID,

gcloud is the command-line tool for Google Cloud. It comes pre-installed on Cloud Shell and supports tab-completion.

- (Optional) You can list the active account name with this command:

- Click Authorize.

Output:

- (Optional) You can list the project ID with this command:

Output:

gcloud, in Google Cloud, refer to the gcloud CLI overview guide.

Task 1. Ensure that the Dataflow API is successfully enabled

To ensure access to the necessary API, restart the connection to the Dataflow API.

-

In the Cloud Console, enter "Dataflow API" in the top search bar. Click on the result for Dataflow API.

-

Click Manage.

-

Click Disable API.

If asked to confirm, click Disable.

- Click Enable.

When the API has been enabled again, the page will show the option to disable.

Test completed task

Click Check my progress to verify your performed task.

Task 2. Download the starter code

- Run the following command in the Cloud Shell to get Dataflow Python Examples from Google Cloud's professional services GitHub:

- Now, in Cloud Shell, set a variable equal to your project id.

Task 3. Create a Cloud Storage bucket

- Use the make bucket command in the Cloud Shell to create a new regional bucket in the

region within your project:

Test completed task

Click Check my progress to verify your performed task.

Task 4. Copy files to your bucket

- Use the

gsutilcommand in the Cloud Shell to copy files into the Cloud Storage bucket you just created:

Test completed task

Click Check my progress to verify your performed task.

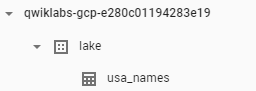

Task 5. Create a BigQuery dataset

- In the Cloud Shell, create a dataset in BigQuery Dataset called

lake. This is where all of your tables will be loaded in BigQuery:

Test completed task

Click Check my progress to verify your performed task.

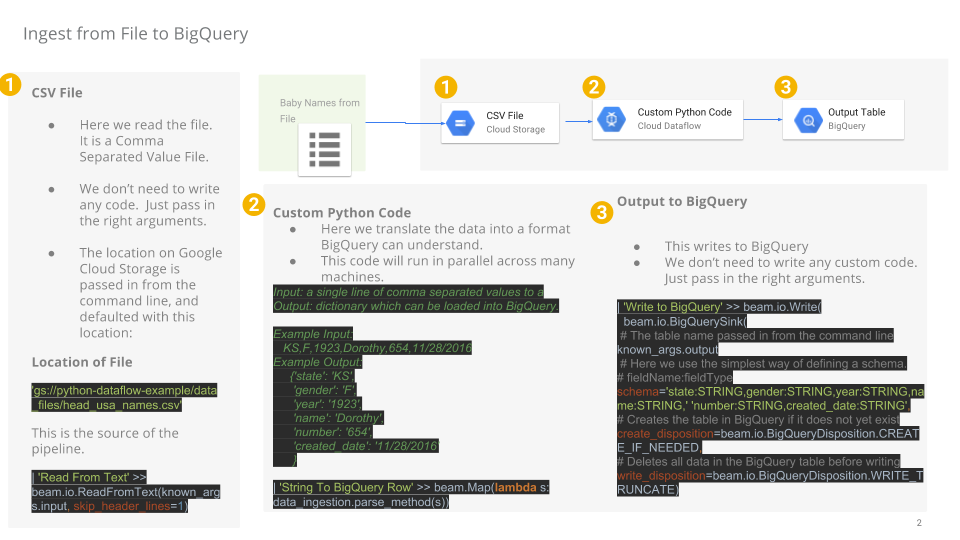

Task 6. Build a Dataflow pipeline

In this section you will create an append-only Dataflow which will ingest data into the BigQuery table. You can use the built-in code editor which will allow you to view and edit the code in the Google Cloud console.

Open the Cloud Shell Code Editor

- Navigate to the source code by clicking on the Open Editor icon in the Cloud Shell:

- If prompted, click on Open in a New Window. It will open the code editor in new window. The Cloud Shell Editor allows you to edit files in the Cloud Shell environment, from the Editor you can return to the Cloud Shell by Clicking on Open Terminal.

Task 7. Data ingestion with a Dataflow Pipeline

You will now build a Dataflow pipeline with a TextIO source and a BigQueryIO destination to ingest data into BigQuery. More specifically, it will:

- Ingest the files from Cloud Storage.

- Filter out the header row in the files.

- Convert the lines read to dictionary objects.

- Output the rows to BigQuery.

Task 8. Review pipeline Python code

In the Code Editor navigate to dataflow-python-examples > dataflow_python_examples and open the data_ingestion.py file. Read through the comments in the file, which explain what the code is doing. This code will populate the dataset lake with a table in BigQuery.

Task 9. Run the Apache Beam pipeline

- Return to your Cloud Shell session for this step. You will now do a bit of set up for the required python libraries.

The Dataflow job in this lab requires Python3.8. To ensure you're on the proper version, you will run the Dataflow processes in a Python 3.8 Docker container.

- Run the following in Cloud Shell to start up a Python Container:

This command will pull a Docker container with the latest stable version of Python 3.8 and execute a command shell to run the next commands within the container. The -v flag provides the source code as a volume for the container so that we can edit in Cloud Shell editor and still access it within the running container.

- Once the container finishes pulling, and starts executing in the Cloud Shell, run the following to install

apache-beamin that running container:

- Next, in the running container in the Cloud Shell, change directories into where you linked the source code:

Run the ingestion Dataflow pipeline in the cloud

- The following will spin up the workers required, and shut them down when complete:

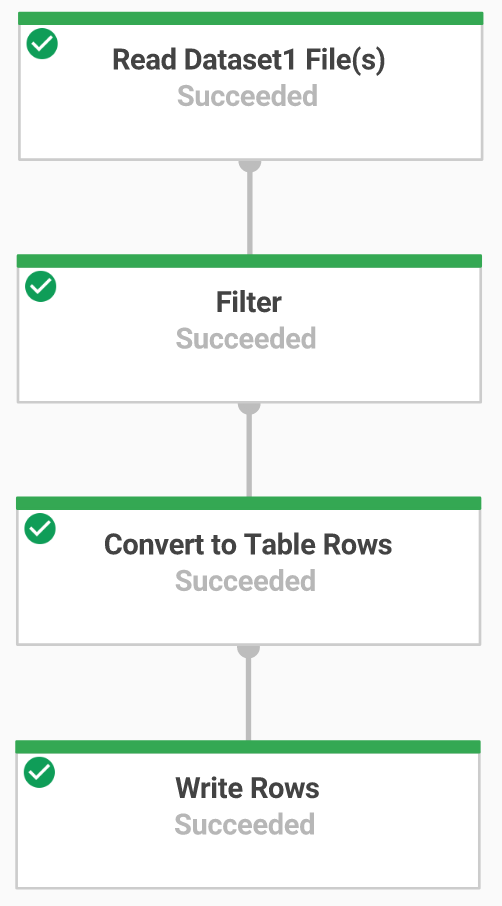

- Return to the Cloud Console and open the Navigation menu > Dataflow to view the status of your job.

-

Click on the name of your job to watch it's progress. Once your Job Status is Succeeded, you can move to the next step. This Dataflow pipeline will take approximately five minutes to start, complete the work, and then shutdown.

-

Navigate to BigQuery (Navigation menu > BigQuery) see that your data has been populated.

- Click on your project name to see the usa_names table under the

lakedataset.

- Click on the table then navigate to the Preview tab to see examples of the

usa_namesdata.

usa_names table, try refreshing the page or view the tables using the classic BigQuery UI.

Test completed task

Click Check my progress to verify your performed task.

Task 10. Data transformation

You will now build a Dataflow pipeline with a TextIO source and a BigQueryIO destination to ingest data into BigQuery. More specifically, you will:

- Ingest the files from Cloud Storage.

- Convert the lines read to dictionary objects.

- Transform the data which contains the year to a format BigQuery understands as a date.

- Output the rows to BigQuery.

Review transformation pipeline python code

In the Code Editor, open data_transformation.py file. Read through the comments in the file which explain what the code is doing.

Task 11. Run the Dataflow transformation pipeline

You will run the Dataflow pipeline in the cloud. This will spin up the workers required, and shut them down when complete.

- Run the following commands to do so:

-

Navigate to Navigation menu > Dataflow and click on the name of this job to view the status of your job. This Dataflow pipeline will take approximately five minutes to start, complete the work, and then shutdown.

-

When your Job Status is Succeeded in the Dataflow Job Status screen, navigate to BigQuery to check to see that your data has been populated.

-

You should see the usa_names_transformed table under the

lakedataset. -

Click on the table and navigate to the Preview tab to see examples of the

usa_names_transformeddata.

usa_names_transformed table, try refreshing the page or view the tables using the classic BigQuery UI.

Test completed task

Click Check my progress to verify your performed task.

Task 12. Data enrichment

You will now build a Dataflow pipeline with a TextIO source and a BigQueryIO destination to ingest data into BigQuery. More specifically, you will:

- Ingest the files from Cloud Storage.

- Filter out the header row in the files.

- Convert the lines read to dictionary objects.

- Output the rows to BigQuery.

Task 13. Review data enrichment pipeline ython code

-

In the Code Editor, open

data_enrichment.pyfile. -

Check out the comments which explain what the code is doing. This code will populate the data in BigQuery.

Line 83 currently looks like:

- Edit it to look like the following:

- When you have finished editing this line, remember to Save this updated file by selecting the File pull down in the Editor and clicking on Save

Task 14. Run the Data Enrichment Dataflow pipeline

Here you'll run the Dataflow pipeline in the cloud.

- Run the following in the Cloud Shell to spin up the workers required, and shut them down when complete:

-

Navigate to Navigation menu > Dataflow to view the status of your job. This Dataflow pipeline will take approximately five minutes to start, complete the work, and then shutdown.

-

Once your Job Status is Succeed in the Dataflow Job Status screen, navigate to BigQuery to check to see that your data has been populated.

You should see the usa_names_enriched table under the lake dataset.

- Click on the table and navigate to the Preview tab to see examples of the

usa_names_enricheddata.

usa_names_enriched table, try refreshing the page or view the tables using the classic BigQuery UI.

Test the completed data enrichment task

Click Check my progress to verify your performed task.

Task 15. Data lake to Mart and Review pipeline python code

Now build a Dataflow pipeline that reads data from two BigQuery data sources, and then joins the data sources. Specifically, you:

- Ingest files from two BigQuery sources.

- Join the two data sources.

- Filter out the header row in the files.

- Convert the lines read to dictionary objects.

- Output the rows to BigQuery.

In the Code Editor, open data_lake_to_mart.py file. Read through the comments in the file which explain what the code is doing. This code will join two tables and populate the resulting data in BigQuery.

Task 16. Run the Apache Beam Pipeline to perform the Data Join and create the resulting table in BigQuery

Now run the Dataflow pipeline in the cloud.

- Run the following code block, in the Cloud Shell, to spin up the workers required, and shut them down when complete:

-

Navigate to Navigation menu > Dataflow and click on the name of this new job to view the status. This Dataflow pipeline will take approximately five minutes to start, complete the work, and then shutdown.

-

Once your Job Status is Succeeded in the Dataflow Job Status screen, navigate to BigQuery to check to see that your data has been populated.

You should see the orders_denormalized_sideinput table under the lake dataset.

- Click on the table and navigate to the Preview section to see examples of

orders_denormalized_sideinputdata.

orders_denormalized_sideinput table, try refreshing the page or view the tables using the classic BigQuery UI.

Test completed JOIN task

Click Check my progress to verify your performed task.

Test your understanding

Below are multiple choice questions to reinforce your understanding of this lab's concepts. Answer them to the best of your abilities.

Congratulations!

You executed Python code using Dataflow to ingest data into BigQuery and transform the data.

Next steps / learn more

Looking for more? Check out official documentation on:

- Dataflow

- BigQuery

- Review the Apache Beam Programming Guide for more advanced concepts.

- Check out these labs:

Google Cloud training and certification

...helps you make the most of Google Cloud technologies. Our classes include technical skills and best practices to help you get up to speed quickly and continue your learning journey. We offer fundamental to advanced level training, with on-demand, live, and virtual options to suit your busy schedule. Certifications help you validate and prove your skill and expertise in Google Cloud technologies.

Manual Last Updated February 11, 2024

Lab Last Tested October 12, 2023

Copyright 2024 Google LLC All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.