Creating PDFs with Go and Cloud Run

- GSP762

- Overview

- Objectives

- Setup and requirements

- Architecture

- Using Google APIs

- Task 1. Get the source code

- Task 2. Creating an invoice microservice

- Task 3. Create a pdf-conversion service

- Task 4. Create a Service Account

- Task 5. Testing the Cloud Run service

- Task 6. Cloud Storage trigger

- Task 7. Testing Cloud Storage notification

- Congratulations!

GSP762

Overview

In this lab, you will build a PDF converter web app on Cloud Run, a serverless service, that automatically converts files stored in Cloud Storage into PDFs stored in a separate Cloud Storage bucket.

Objectives

In this lab, you will:

- Convert a Go application to a container

- Learn how to build containers with Google Cloud Build

- Create a Cloud Run service that converts files to PDF files in the cloud

- Understand how to create Service Accounts and add permissions

- Use event processing with Cloud Storage

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab---remember, once you start, you cannot pause a lab.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a pop-up opens for you to select your payment method. On the left is the Lab Details panel with the following:

  - The Open Google Cloud console button

  - Time remaining

  - The temporary credentials that you must use for this lab

  - Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

  The lab spins up resources, and then opens another tab that shows the Sign in page.

  Tip: Arrange the tabs in separate windows, side-by-side.

  Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

  {{{user_0.username | "Username"}}}

  You can also find the Username in the Lab Details panel.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

  {{{user_0.password | "Password"}}}

  You can also find the Password in the Lab Details panel.

- Click Next.

  Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

  Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

  - Accept the terms and conditions.

  - Do not add recovery options or two-factor authentication (because this is a temporary account).

  - Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Activate Cloud Shell

Cloud Shell is a virtual machine that is loaded with development tools. It offers a persistent 5 GB home directory and runs on Google Cloud. Cloud Shell provides command-line access to your Google Cloud resources.

- Click Activate Cloud Shell at the top of the Google Cloud console.

When you are connected, you are already authenticated, and the project is set to your Project_ID.

gcloud is the command-line tool for Google Cloud. It comes pre-installed on Cloud Shell and supports tab-completion.

- (Optional) You can list the active account name with this command:

      gcloud auth list

- Click Authorize.

- (Optional) You can list the project ID with this command:

      gcloud config list project

Note: For full documentation of gcloud, in Google Cloud, refer to the gcloud CLI overview guide.

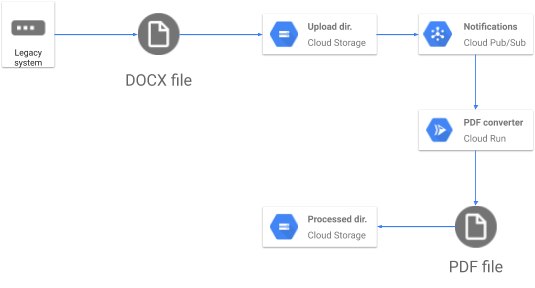

Architecture

In this lab you will assist the Pet Theory Veterinary practice to automatically convert their invoices into PDFs so that customers can open them reliably.

Using Google APIs

During this lab you will use Google APIs. The following APIs have been enabled for you:

| Name | API |
|---|---|
| Cloud Build | cloudbuild.googleapis.com |
| Cloud Storage | storage-component.googleapis.com |
| Cloud Run | run.googleapis.com |

Task 1. Get the source code

Get started by downloading the code necessary for this lab.

- Activate your lab account:

      gcloud auth list --filter=status:ACTIVE --format="value(account)"

- Run the following to clone the Pet Theory repository:

      git clone https://github.com/Deleplace/pet-theory.git

- Move to the correct directory:

      cd pet-theory/lab03

Task 2. Creating an invoice microservice

In this section you will create a Go application to process requests. As outlined in the architecture diagram, you will integrate Cloud Storage as part of the solution.

- Click the Open Editor icon and then click Open in a new window.

- Navigate to pet-theory > lab03 > server.go

- Open the server.go source code and edit it to match the text below:

      package main

      import (
          "fmt"
          "io/ioutil"
          "log"
          "net/http"
          "os"
          "os/exec"
          "regexp"
          "strings"
      )

      func main() {
          http.HandleFunc("/", process)

          port := os.Getenv("PORT")
          if port == "" {
              port = "8080"
              log.Printf("Defaulting to port %s", port)
          }

          log.Printf("Listening on port %s", port)
          err := http.ListenAndServe(fmt.Sprintf(":%s", port), nil)
          log.Fatal(err)
      }

      func process(w http.ResponseWriter, r *http.Request) {
          log.Println("Serving request")

          if r.Method == "GET" {
              fmt.Fprintln(w, "Ready to process POST requests from Cloud Storage trigger")
              return
          }

          //
          // Read request body containing Cloud Storage object metadata
          //
          gcsInputFile, err1 := readBody(r)
          if err1 != nil {
              log.Printf("Error reading POST data: %v", err1)
              w.WriteHeader(http.StatusBadRequest)
              fmt.Fprintf(w, "Problem with POST data: %v \n", err1)
              return
          }

          //
          // Working directory (concurrency-safe)
          //
          localDir, errDir := ioutil.TempDir("", "")
          if errDir != nil {
              log.Printf("Error creating local temp dir: %v", errDir)
              w.WriteHeader(http.StatusInternalServerError)
              fmt.Fprintf(w, "Could not create a temp directory on server. \n")
              return
          }
          defer os.RemoveAll(localDir)

          //
          // Download input file from Cloud Storage
          //
          localInputFile, err2 := download(gcsInputFile, localDir)
          if err2 != nil {
              log.Printf("Error downloading Cloud Storage file [%s] from bucket [%s]: %v", gcsInputFile.Name, gcsInputFile.Bucket, err2)
              w.WriteHeader(http.StatusInternalServerError)
              fmt.Fprintf(w, "Error downloading Cloud Storage file [%s] from bucket [%s]", gcsInputFile.Name, gcsInputFile.Bucket)
              return
          }

          //
          // Use LibreOffice to convert local input file to local PDF file.
          //
          localPDFFilePath, err3 := convertToPDF(localInputFile.Name(), localDir)
          if err3 != nil {
              log.Printf("Error converting to PDF: %v", err3)
              w.WriteHeader(http.StatusInternalServerError)
              fmt.Fprintf(w, "Error converting to PDF.")
              return
          }

          //
          // Upload the freshly generated PDF to Cloud Storage
          //
          targetBucket := os.Getenv("PDF_BUCKET")
          err4 := upload(localPDFFilePath, targetBucket)
          if err4 != nil {
              log.Printf("Error uploading PDF file to bucket [%s]: %v", targetBucket, err4)
              w.WriteHeader(http.StatusInternalServerError)
              fmt.Fprintf(w, "Error uploading PDF file to bucket [%s]", targetBucket)
              return
          }

          //
          // Delete the original input file from Cloud Storage.
          //
          err5 := deleteGCSFile(gcsInputFile.Bucket, gcsInputFile.Name)
          if err5 != nil {
              log.Printf("Error deleting file [%s] from bucket [%s]: %v", gcsInputFile.Name, gcsInputFile.Bucket, err5)
              // This is not a blocking error.
              // The PDF was successfully generated and uploaded.
          }

          log.Println("Successfully produced PDF")
          fmt.Fprintln(w, "Successfully produced PDF")
      }

      func convertToPDF(localFilePath string, localDir string) (resultFilePath string, err error) {
          log.Printf("Converting [%s] to PDF", localFilePath)
          cmd := exec.Command("libreoffice", "--headless", "--convert-to", "pdf",
              "--outdir", localDir,
              localFilePath)
          cmd.Stdout, cmd.Stderr = os.Stdout, os.Stderr
          log.Println(cmd)
          err = cmd.Run()
          if err != nil {
              return "", err
          }

          pdfFilePath := regexp.MustCompile(`\.\w+$`).ReplaceAllString(localFilePath, ".pdf")
          if !strings.HasSuffix(pdfFilePath, ".pdf") {
              pdfFilePath += ".pdf"
          }
          log.Printf("Converted %s to %s", localFilePath, pdfFilePath)
          return pdfFilePath, nil
      }

- Now run the following to build the application:

      go build -o server

  Expected Output:

      go: downloading cloud.google.com/go/storage v1.6.0
      go: downloading cloud.google.com/go v0.53.0
      go: downloading github.com/googleapis/gax-go/v2 v2.0.5
      go: downloading google.golang.org/api v0.18.0
      go: downloading google.golang.org/genproto v0.0.0-20200224152610-e50cd9704f63
      go: downloading google.golang.org/grpc v1.27.1
      go: downloading go.opencensus.io v0.22.3
      go: downloading golang.org/x/oauth2 v0.0.0-20200107190931-bf48bf16ab8d
      go: downloading github.com/golang/protobuf v1.3.3
      go: downloading golang.org/x/net v0.0.0-20200222125558-5a598a2470a0
      go: downloading github.com/golang/groupcache v0.0.0-20200121045136-8c9f03a8e57e
      go: downloading golang.org/x/sys v0.0.0-20200223170610-d5e6a3e2c0ae
      go: downloading golang.org/x/text v0.3.2

The functions called by this top-level code are in source files:

- server.go

- notification.go

- gcs.go

With the application successfully built, you can create the pdf-conversion service.
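The renaming step at the end of convertToPDF can be tried in isolation. The following is a minimal sketch, assuming a hypothetical helper named pdfPath that is not part of the lab repository; it only reproduces the regular-expression logic shown in server.go and makes no claim about how LibreOffice itself names its output.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// extRE matches a trailing file extension such as ".docx" or ".xls".
var extRE = regexp.MustCompile(`\.\w+$`)

// pdfPath (hypothetical helper) mirrors the renaming logic in convertToPDF:
// replace the final extension with ".pdf", or append ".pdf" if the path
// has no extension at all.
func pdfPath(localFilePath string) string {
	p := extRE.ReplaceAllString(localFilePath, ".pdf")
	if !strings.HasSuffix(p, ".pdf") {
		p += ".pdf"
	}
	return p
}

func main() {
	// The paths below are illustrative only.
	for _, in := range []string{
		"/tmp/work/file-sample_100kB.doc",
		"/tmp/work/cat-and-mouse.jpg",
		"/tmp/work/README",
	} {
		fmt.Printf("%s -> %s\n", in, pdfPath(in))
	}
}
```

Only the last extension is replaced, so a name with several dots keeps everything before the final one.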

Task 3. Create a pdf-conversion service

The PDF service will use Cloud Run and Cloud Storage to initiate a process each time a file is uploaded to the designated storage.

To achieve this you will use a common pattern of event notifications together with Cloud Pub/Sub. Doing this enables the application to concentrate only on processing information. Transporting and passing information is performed by other services, which allows you to keep the application simple.

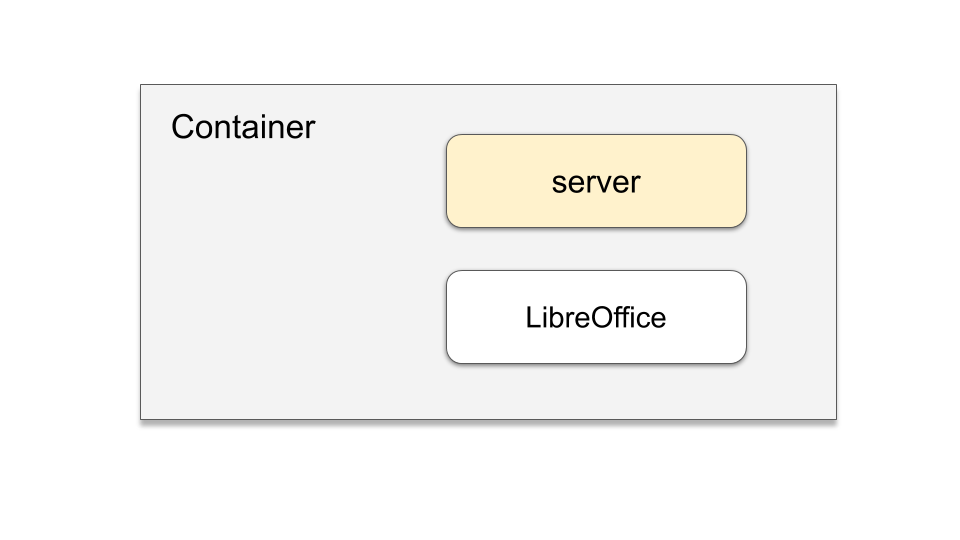

Building the invoice module requires the integration of two components:

Adding the LibreOffice package means it can be used in your application.

- In the editor, open the existing Dockerfile manifest and update the file as shown below:

      FROM amd64/debian
      RUN apt-get update -y \
        && apt-get install -y libreoffice \
        && apt-get clean
      WORKDIR /usr/src/app
      COPY server .
      CMD [ "./server" ]

- Save the updated Dockerfile.

- Initiate a rebuild of the pdf-converter image using Cloud Build:

      gcloud builds submit \
        --tag gcr.io/$GOOGLE_CLOUD_PROJECT/pdf-converter

  Click Check my progress to verify that you've performed the above task.

  Build an image with Cloud Build

- Deploy the updated pdf-converter service.

  Note: It's a good idea to give LibreOffice 2 GB of RAM to work with; see the line with the --memory option.

- Run this command to deploy the container image:

      gcloud run deploy pdf-converter \
        --image gcr.io/$GOOGLE_CLOUD_PROJECT/pdf-converter \
        --platform managed \
        --region {{{ project_0.default_region | "REGION" }}} \
        --memory=2Gi \
        --no-allow-unauthenticated \
        --set-env-vars PDF_BUCKET=$GOOGLE_CLOUD_PROJECT-processed \
        --max-instances=3

  Click Check my progress to verify that you've performed the above task.

PDF Converter service deployed

The Cloud Run service has now been successfully deployed. However, because it was deployed with --no-allow-unauthenticated, callers need the correct permissions to access it.

Task 4. Create a Service Account

A Service Account is a special type of account with access to Google APIs.

This lab uses a Service Account to invoke the Cloud Run service when a Cloud Storage event is processed. Cloud Storage supports a rich set of notifications that can be used to trigger events.

Next, configure Cloud Storage to notify the application when a file has been uploaded.

- Click the Navigation menu > Cloud Storage, and verify that two buckets have been created. You should see:

  - A bucket whose name ends in -processed

  - A bucket whose name ends in -upload

- Create a Pub/Sub notification that fires when a new file has been uploaded to the upload bucket. The notifications are published to the topic "new-doc".

      gsutil notification create -t new-doc -f json -e OBJECT_FINALIZE gs://$GOOGLE_CLOUD_PROJECT-upload

  Expected Output:

      Created Cloud Pub/Sub topic projects/{{{project_0.project_id | "PROJECT_ID"}}}/topics/new-doc
      Created notification config projects/_/buckets/{{{project_0.project_id | "PROJECT_ID"}}}-upload/notificationConfigs/1

- Create a new service account to trigger the Cloud Run services:

      gcloud iam service-accounts create pubsub-cloud-run-invoker --display-name "PubSub Cloud Run Invoker"

  Expected Output:

      Created service account [pubsub-cloud-run-invoker].

- Give the service account permission to invoke the PDF converter service:

      gcloud run services add-iam-policy-binding pdf-converter \
        --member=serviceAccount:pubsub-cloud-run-invoker@$GOOGLE_CLOUD_PROJECT.iam.gserviceaccount.com \
        --role=roles/run.invoker \
        --region {{{ project_0.default_region | "REGION" }}} \
        --platform managed

  Expected Output:

      Updated IAM policy for service [pdf-converter].
      bindings:
      - members:
        - serviceAccount:pubsub-cloud-run-invoker@{{{project_0.project_id | "PROJECT_ID"}}}.iam.gserviceaccount.com
        role: roles/run.invoker
      etag: BwYYfbXS240=
      version: 1

- Find your project number by running this command:

      PROJECT_NUMBER=$(gcloud projects list \
        --format="value(PROJECT_NUMBER)" \
        --filter="$GOOGLE_CLOUD_PROJECT")

- Enable your project to create Cloud Pub/Sub authentication tokens:

      gcloud projects add-iam-policy-binding $GOOGLE_CLOUD_PROJECT \
        --member=serviceAccount:{{{ project_0.project_id | "PROJECT_ID" }}}@{{{ project_0.project_id | "PROJECT_ID" }}}.iam.gserviceaccount.com \
        --role=roles/iam.serviceAccountTokenCreator

  Click Check my progress to verify that you've performed the above task.

Service Account created

With the Service Account created, it can be used to invoke the Cloud Run service.

Task 5. Testing the Cloud Run service

Before progressing further, test the deployed service. Remember the service requires authentication, so test that to ensure it is actually private.

- Save the URL of your service in the environment variable $SERVICE_URL:

      SERVICE_URL=$(gcloud run services describe pdf-converter \
        --platform managed \
        --region {{{ project_0.default_region | "REGION" }}} \
        --format "value(status.url)")

- Display the service URL:

      echo $SERVICE_URL

- Make an anonymous GET request to your new service:

      curl -X GET $SERVICE_URL

  Expected Output:

      <html><head>
      <meta http-equiv="content-type" content="text/html;charset=utf-8">
      <title>403 Forbidden</title>
      </head>
      <body text=#000000 bgcolor=#ffffff>
      <h1>Error: Forbidden</h1>
      <h2>Your client does not have permission to get URL <code>/</code> from this server.</h2>
      <h2></h2>

  NOTE: The anonymous GET request will result in an error message: "Your client does not have permission to get URL". This is good; you don't want the service to be callable by anonymous users.

- Now try invoking the service as an authorized user:

      curl -X GET -H "Authorization: Bearer $(gcloud auth print-identity-token)" $SERVICE_URL

  Expected Output:

      Ready to process POST requests from Cloud Storage trigger

Great work, you have successfully deployed an authenticated Cloud Run service.

Task 6. Cloud Storage trigger

To initiate a notification when new content is uploaded to Cloud Storage, add a subscription to your existing Pub/Sub Topic.

- Create a Pub/Sub subscription so that the PDF converter will be run whenever a message is published to the topic new-doc:

      gcloud pubsub subscriptions create pdf-conv-sub \
        --topic new-doc \
        --push-endpoint=$SERVICE_URL \
        --push-auth-service-account=pubsub-cloud-run-invoker@$GOOGLE_CLOUD_PROJECT.iam.gserviceaccount.com

  Expected Output:

      Created subscription [projects/{{{ project_0.project_id| "PROJECT_ID" }}}/subscriptions/pdf-conv-sub].

  Click Check my progress to verify that you've performed the above task.

  Confirm Pub/Sub Subscription

Now, whenever a file is uploaded, the Pub/Sub subscription will interact with your Service Account. The Service Account will then initiate your PDF Converter Cloud Run service.

Task 7. Testing Cloud Storage notification

To test the Cloud Run service, use the example files available.

- Copy the test files into your upload bucket:

      gsutil -m cp -r gs://spls/gsp762/* gs://$GOOGLE_CLOUD_PROJECT-upload

  Expected Output:

      Copying gs://spls/gsp762/cat-and-mouse.jpg [Content-Type=image/jpeg]...
      Copying gs://spls/gsp762/file-sample_100kB.doc [Content-Type=application/msword]...
      Copying gs://spls/gsp762/file-sample_500kB.docx [Content-Type=application/vnd.openxmlformats-officedocument.wordprocessingml.document]...
      Copying gs://spls/gsp762/file_example_XLS_10.xls [Content-Type=application/vnd.ms-excel]...
      Copying gs://spls/gsp762/file-sample_1MB.docx [Content-Type=application/vnd.openxmlformats-officedocument.wordprocessingml.document]...
      Copying gs://spls/gsp762/file_example_XLSX_50.xlsx [Content-Type=application/vnd.openxmlformats-officedocument.spreadsheetml.sheet]...
      Copying gs://spls/gsp762/file_example_XLS_100.xls [Content-Type=application/vnd.ms-excel]...
      Copying gs://spls/gsp762/file_example_XLS_50.xls [Content-Type=application/vnd.ms-excel]...
      Copying gs://spls/gsp762//Copy of cat-and-mouse.jpg [Content-Type=image/jpeg]...

- In the Cloud Console, click Cloud Storage > Buckets followed by the bucket whose name ends in "-upload".

- Click the Refresh button a few times and see how the files are deleted, one by one, as they are converted to PDFs.

- Then click Buckets, followed by the bucket whose name ends in "-processed". It should contain PDF versions of all files.

  NOTE: It can take a few minutes to process the files. Use the Bucket refresh option to check the processing completion state.

- Feel free to open the PDF files to make sure they were properly converted.

- Once the upload is done, click Navigation menu > Cloud Run and click on the pdf-converter service.

- Select the LOGS tab and add a filter of "Converting" to see the converted files.

- Navigate to Navigation menu > Cloud Storage and open the bucket whose name ends in "-upload" to confirm all uploaded files have been processed.

Excellent work, you have successfully built a new service to create a PDF using files uploaded to Cloud Storage.

Congratulations!

In this lab, you've learned how to convert a Go application into a container, learned how to construct containers utilizing Google Cloud Build, and launched a Cloud Run service.

You've also gained skills in enabling permissions through a Service Account and leveraging Cloud Storage event processing, all of which are integral to the operation of the pdf-converter service that transforms documents into PDFs and stores them in the "processed" bucket.


Manual Last Updated May 15, 2024

Lab Last Tested May 15, 2024

Copyright 2024 Google LLC All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.