Before you begin

- Labs create a Google Cloud project and resources for a fixed time.

- Labs have a time limit and no pause feature. If you end the lab, you'll have to restart from the beginning.

- At the top left of your screen, click Start lab to begin.

- Create the custom network

- Create a subnet for the custom network in the us-west1 region

- Create the firewall rule in the custom network

- Create the web server in the custom network

- Install Apache on the web server

- Export the network traffic to BigQuery

In this lab, you will learn how to configure a network to record traffic to and from an Apache web server using VPC Flow Logs. You will then export the logs to BigQuery for analysis.

There are multiple use cases for VPC Flow Logs. For example, you might use VPC Flow Logs to determine where your applications are being accessed from to optimize network traffic expense, to create HTTP Load Balancers to balance traffic globally, or to denylist unwanted IP addresses with Cloud Armor.

In this lab, you will learn how to perform the following tasks:

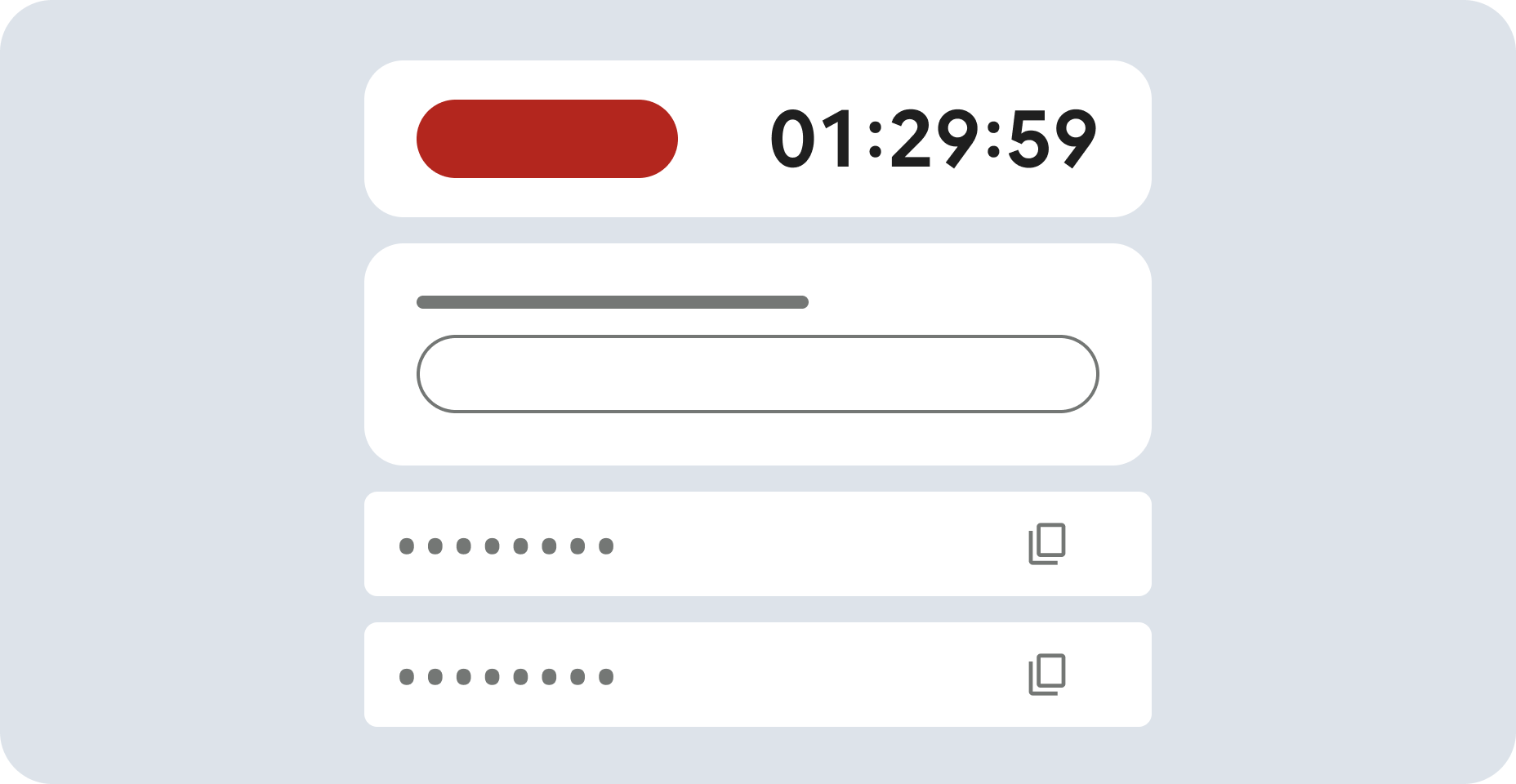

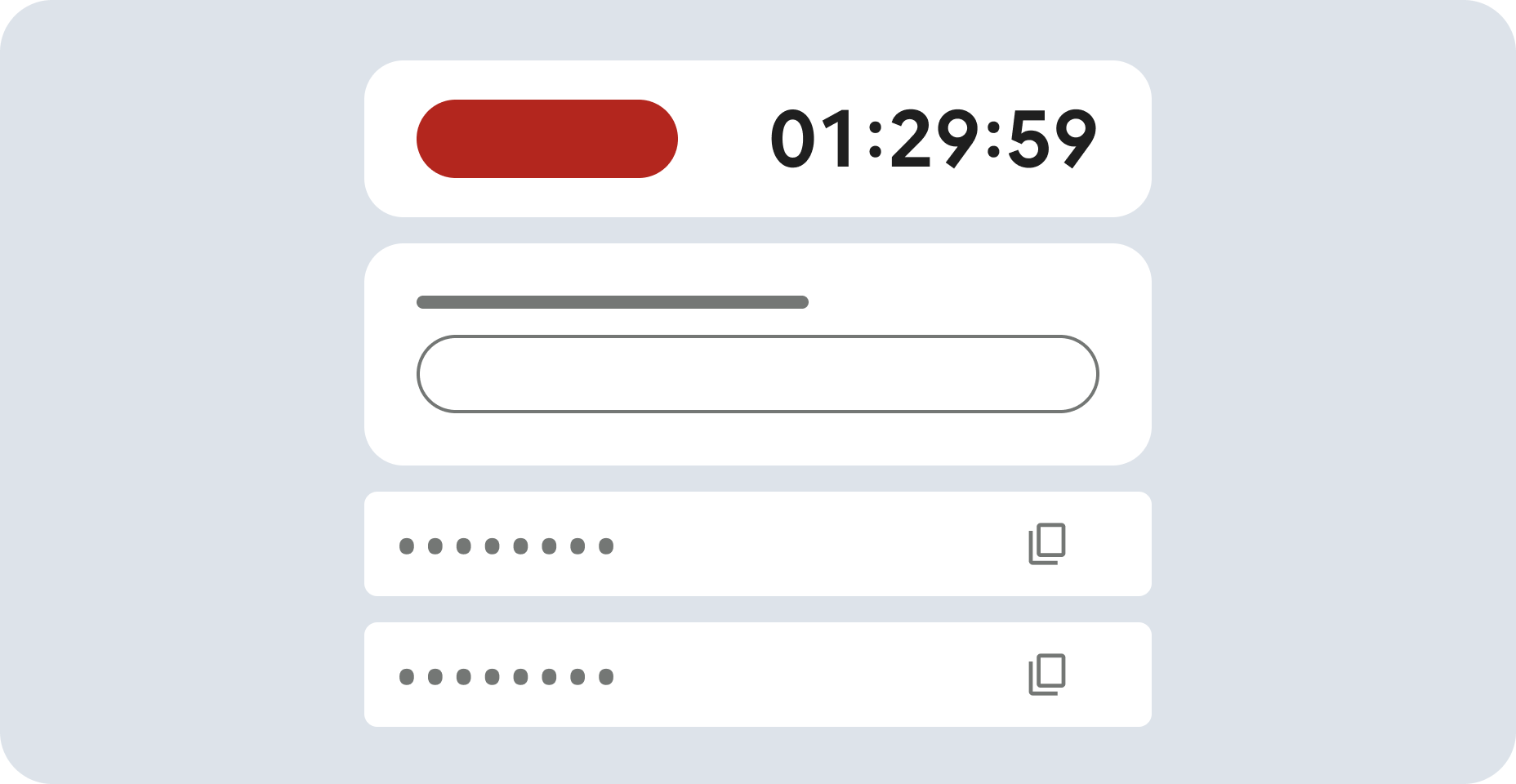

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources are made available to you.

This hands-on lab lets you do the lab activities in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

Click the Start Lab button. If you need to pay for the lab, a dialog opens for you to select your payment method. On the left is the Lab Details pane with the following:

Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

The lab spins up resources, and then opens another tab that shows the Sign in page.

Tip: Arrange the tabs in separate windows, side-by-side.

If necessary, copy the Username below and paste it into the Sign in dialog.

You can also find the Username in the Lab Details pane.

Click Next.

Copy the Password below and paste it into the Welcome dialog.

You can also find the Password in the Lab Details pane.

Click Next.

Click through the subsequent pages:

After a few moments, the Google Cloud console opens in this tab.

Cloud Shell is a virtual machine that is loaded with development tools. It offers a persistent 5 GB home directory and runs on Google Cloud. Cloud Shell provides command-line access to your Google Cloud resources.

Click Activate Cloud Shell.

Click through the following windows:

When you are connected, you are already authenticated, and the project is set to your Project_ID.

gcloud is the command-line tool for Google Cloud. It comes pre-installed on Cloud Shell and supports tab-completion.

For full documentation of gcloud in Google Cloud, refer to the gcloud CLI overview guide.

VPC Flow Logs are disabled by default. Therefore, you will create a new custom-mode network and enable VPC Flow Logs on its subnet.

In the Console, on the Navigation menu (☰), click VPC network > VPC networks.

Click Create VPC Network.

Set the following values, and leave all others at their defaults:

| Property | Value (type value or select option as specified) |
|---|---|
| Name | vpc-net |
| Description | Enter an optional description |

For Subnet creation mode, click Custom.

Set the following values, and leave all others at their defaults:

| Property | Value (type value or select option as specified) |
|---|---|
| Name | vpc-subnet |
| Region | us-west1 |
| IPv4 range | 10.1.3.0/24 |
| Flow Logs | On |

Click Done, and then click Create.
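The vpc-subnet range 10.1.3.0/24 holds 256 IPv4 addresses, but Google Cloud reserves four addresses in every primary subnet range (the network address, the default gateway, the second-to-last address, and the broadcast address), so instances such as web-server receive their internal IPs from the remaining 252. A quick check with Python's standard `ipaddress` module is sketched below; the helper names `reserved_addresses` and `assignable_addresses` are illustrative, not part of any Google Cloud tool.

```python
import ipaddress

# Primary IPv4 range entered for vpc-subnet.
SUBNET = ipaddress.ip_network("10.1.3.0/24")

def reserved_addresses(net):
    """Return the four addresses Google Cloud reserves in a primary IPv4 range."""
    return {
        net.network_address,        # first address: network
        net.network_address + 1,    # second address: default gateway
        net.broadcast_address - 1,  # second-to-last: reserved by Google Cloud
        net.broadcast_address,      # last address: broadcast
    }

def assignable_addresses(net):
    """List the addresses that remain available for VM network interfaces."""
    reserved = reserved_addresses(net)
    return [ip for ip in net if ip not in reserved]

usable = assignable_addresses(SUBNET)
print(SUBNET.num_addresses, len(usable), usable[0], usable[-1])
# 256 252 10.1.3.2 10.1.3.253
```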

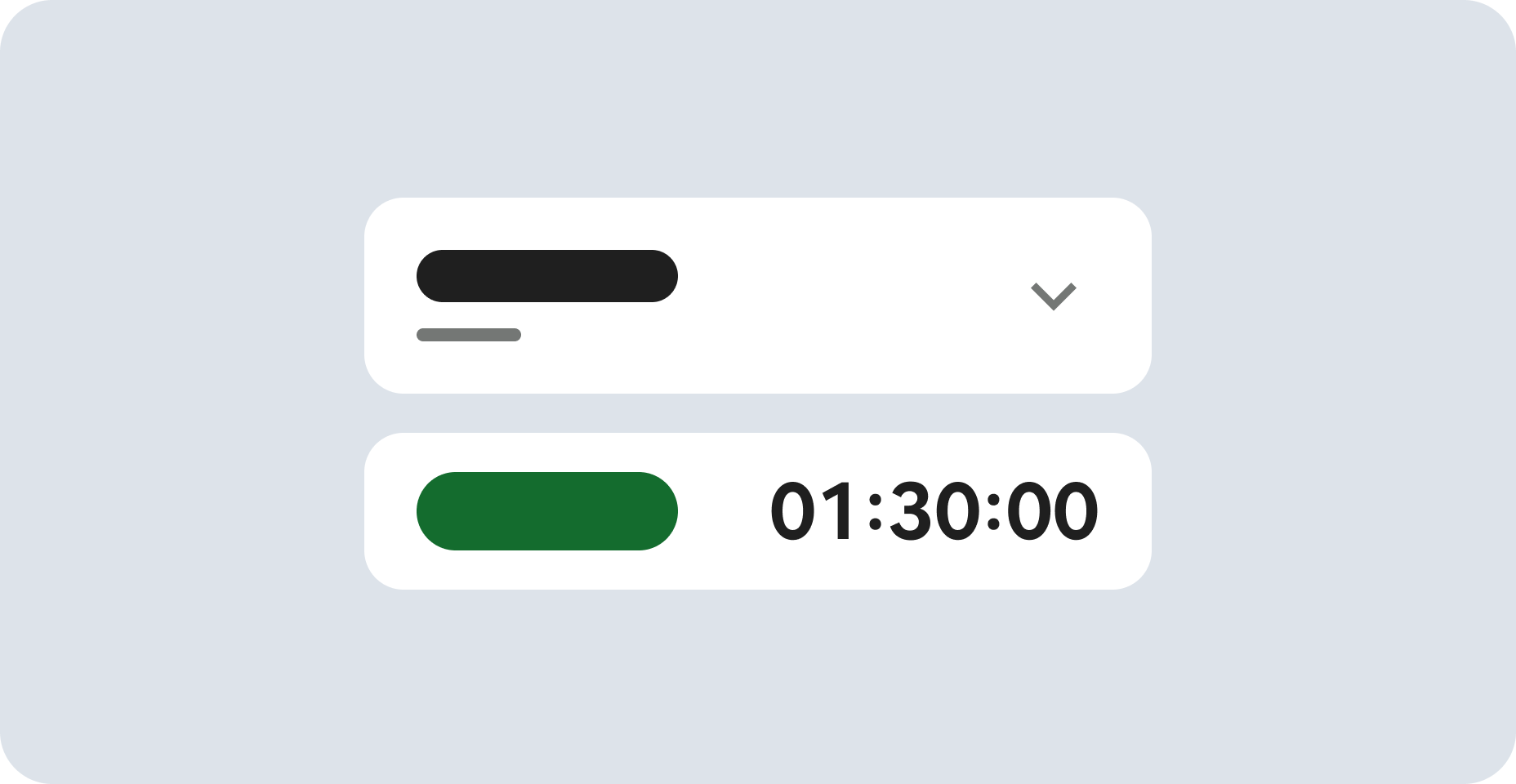

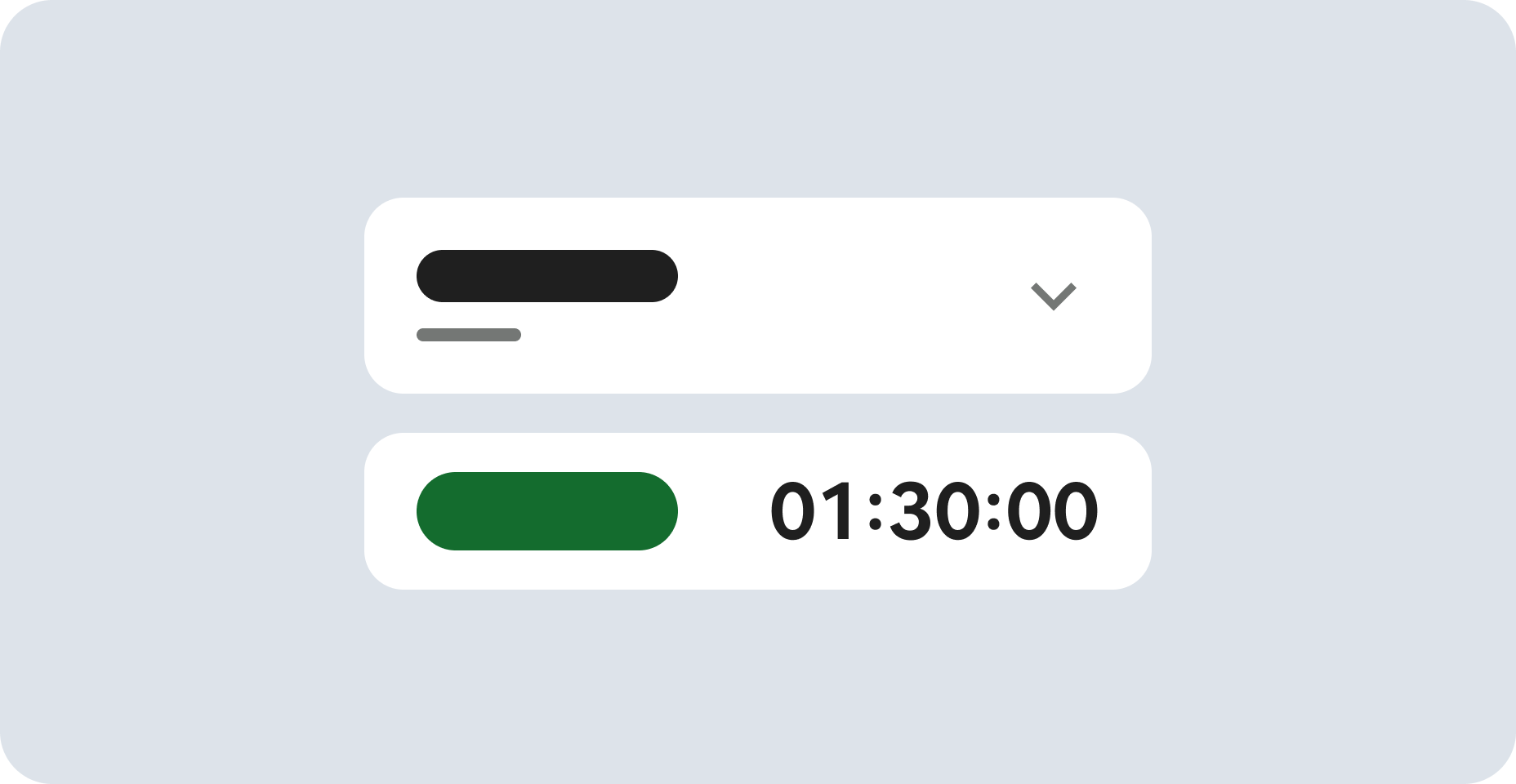

Click Check my progress to verify the task you performed. If you completed the task successfully, you are granted an assessment score.

To allow HTTP and SSH traffic into the network, you need to create a firewall rule.

In the left menu, click Firewall.

Click Create Firewall Rule.

Set the following values, and leave all others at their defaults:

| Property | Value (type value or select option as specified) |
|---|---|
| Name | allow-http-ssh |
| Network | vpc-net |
| Targets | Specified target tags |
| Target tags | http-server |
| Source filter | IPv4 ranges |
| Source IPv4 ranges | 0.0.0.0/0 |
| Protocols and ports | Specified protocols and ports; then check tcp and type 80, 22 |

Click Create.
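The allow-http-ssh rule only takes effect for instances that carry the http-server target tag, and then only for TCP traffic on ports 80 and 22 from any IPv4 source. The sketch below is a simplified, hypothetical model of that matching logic (the `IngressAllowRule` class is invented for illustration and is not the Compute Engine API); it ignores rule priorities, the implied deny-ingress rule, and any other rules in the network.

```python
import ipaddress
from dataclasses import dataclass

@dataclass(frozen=True)
class IngressAllowRule:
    """Hypothetical, simplified model of a single ingress allow rule."""
    name: str
    target_tags: frozenset
    source_ranges: tuple
    tcp_ports: frozenset

    def allows(self, vm_tags, src_ip, protocol, port):
        # The rule only applies to instances that carry one of its target tags.
        if not self.target_tags & set(vm_tags):
            return False
        # The packet's source address must fall inside one of the source ranges.
        addr = ipaddress.ip_address(src_ip)
        if not any(addr in ipaddress.ip_network(r) for r in self.source_ranges):
            return False
        # Only the listed TCP ports are allowed.
        return protocol == "tcp" and port in self.tcp_ports

ALLOW_HTTP_SSH = IngressAllowRule(
    name="allow-http-ssh",
    target_tags=frozenset({"http-server"}),
    source_ranges=("0.0.0.0/0",),
    tcp_ports=frozenset({80, 22}),
)

print(ALLOW_HTTP_SSH.allows({"http-server"}, "203.0.113.7", "tcp", 80))  # True
print(ALLOW_HTTP_SSH.allows(set(), "203.0.113.7", "tcp", 80))            # False
```

The second call shows why the web server must be tagged http-server: without the tag, the rule never applies to it.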

Click Check my progress to verify the task you performed. If you completed the task successfully, you are granted an assessment score.

In the Cloud console, on the Navigation menu (☰), click Compute Engine > VM Instances, then click Create instance.

In the Machine configuration section, enter the values for the following fields:

| Field | Value |
|---|---|
| Name | web-server |
| Region | us-west1 |
| Zone | |
| Series | E2 |
| Machine Type | e2-micro (2 vCPU, 1 GB memory) |

Click Networking. Under Network interfaces, click default to edit it, and then set Network to vpc-net and Subnetwork to vpc-subnet.

Once all sections are configured, scroll down and click Create to launch your new virtual machine instance.

Wait a couple of minutes; a green check mark appears when the instance has launched.

Click Check my progress to verify the task you performed. If you completed the task successfully, you are granted an assessment score.

Configure the VM instance that you created as an Apache web server and overwrite the default web page.

In the Console, on the Navigation menu (☰), click Compute Engine > VM instances.

For web-server, click SSH to launch a terminal and connect.

Click Check my progress to verify the task you performed. If you completed the task successfully, you are granted an assessment score.

In the Console, on the Navigation menu (☰), click Compute Engine > VM instances.

Find the IP address of the computer you are using. One easy way to do this is to visit a website that reports your public IP address. Note this address as YOUR_IP_ADDRESS.

In the Console, navigate to Navigation menu > View All Products > Logging > Logs Explorer.

In the Log fields panel, under Resource type, click Subnetwork. In the Query results pane, entries from the subnetwork logs appear.

In the Log fields panel, under Log name, click compute.googleapis.com/vpc_flows.

In the Query search box at the top, enter "YOUR_IP_ADDRESS", substituting your own address. Then click Run query.

Feel free to explore other fields within the log entry before moving to the next task.
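In a VPC Flow Logs entry, the connection details sit under `jsonPayload.connection`, so your address typically shows up as `src_ip` on requests you send to web-server and as `dest_ip` on its responses. The sketch below filters a list of hypothetical payloads the same way; unlike the Logs Explorer text search, which matches the address in any field, it checks only those two connection fields. The function name and sample data are illustrative assumptions.

```python
def entries_involving(entries, ip):
    """Return flow-log payloads whose connection has `ip` as source or destination."""
    return [
        entry for entry in entries
        if ip in (entry["connection"]["src_ip"], entry["connection"]["dest_ip"])
    ]

# Hypothetical payloads, trimmed to the connection fields used here.
SAMPLE = [
    {"connection": {"src_ip": "203.0.113.7", "dest_ip": "10.1.3.2", "dest_port": 80}},
    {"connection": {"src_ip": "10.1.3.2", "dest_ip": "203.0.113.7", "src_port": 80}},
    {"connection": {"src_ip": "198.51.100.4", "dest_ip": "10.1.3.2", "dest_port": 22}},
]

mine = entries_involving(SAMPLE, "203.0.113.7")
print(len(mine))  # 2
```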

In the Console, in the left pane, click Logs Explorer.

From the All resources drop-down, select Subnetwork, and then click Apply.

From the All log names drop-down, check vpc_flows and click Apply. Then click Run query.
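Selecting Subnetwork and vpc_flows in these drop-downs builds a Logging query, which the Create Sink dialog typically pre-fills as the sink's inclusion filter. The sketch below prints a roughly equivalent query, assuming the `gce_subnetwork` resource type and the VPC Flow Logs log ID `compute.googleapis.com/vpc_flows`, which is URL-encoded inside `logName`. The helper `vpc_flows_filter` and the project ID `my-project` are hypothetical; the console may format the query differently.

```python
from urllib.parse import quote

def vpc_flows_filter(project_id):
    """Build a Logging query matching VPC Flow Logs entries for subnetworks (sketch)."""
    # The "/" inside the log ID must be URL-encoded as %2F in logName.
    log_id = quote("compute.googleapis.com/vpc_flows", safe="")
    # Comparisons on separate lines are implicitly ANDed by the query language.
    return (
        'resource.type="gce_subnetwork"\n'
        f'logName="projects/{project_id}/logs/{log_id}"'
    )

print(vpc_flows_filter("my-project"))
```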

Click More Actions > Create Sink.

For "Sink Name", type vpc-flows and click NEXT.

For "Select sink service", select BigQuery dataset.

For "Sink Destination", select Create new BigQuery dataset.

For "Dataset ID", type bq_vpc_flows, and then click CREATE DATASET.

Click CREATE SINK. The Logs Router Sinks page appears, and you should see the sink you created (vpc-flows). If you don't see the sink, click Logs Router.

Now that the network traffic logs are exported to BigQuery, generate more traffic by accessing the web-server several times. In Cloud Shell, use curl to request the web-server's external IP address several times.
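The lab generates this traffic with curl from Cloud Shell. As an alternative, the sketch below sends repeated HTTP GET requests using only Python's standard library, assuming you have already noted the web-server's external IP address. The function `generate_traffic` and its injectable `fetch` parameter (which lets you swap in a stub for testing) are illustrative choices, not part of the lab.

```python
import urllib.request

def generate_traffic(external_ip, count=10, fetch=urllib.request.urlopen, timeout=5):
    """Send `count` HTTP GET requests to http://<external_ip>/ and collect status codes."""
    url = f"http://{external_ip}/"
    statuses = []
    for _ in range(count):
        # Each request creates a flow that VPC Flow Logs can record (subject to sampling).
        with fetch(url, timeout=timeout) as response:
            statuses.append(response.status)
    return statuses
```

For example, `generate_traffic("EXTERNAL_IP", count=20)`, with the placeholder replaced by the real address, should return a list of 200 status codes once Apache is serving the page.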

In the Console, on the Navigation menu (☰), click Compute Engine > VM instances.

Note the external IP address of web-server as EXTERNAL_IP.

In Cloud Shell, store EXTERNAL_IP in an environment variable, replacing <EXTERNAL_IP> with the address you just noted.

Click Check my progress to verify the task you performed. If you completed the task successfully, you are granted an assessment score.

The Welcome to BigQuery in the Cloud Console message box opens. This message box provides a link to the quickstart guide and the release notes.

The BigQuery console opens.

Click the Details tab.

Copy the Table ID provided in the Details tab.

Add the following to the Query editor, replacing your_table_id with TABLE_ID while keeping the backticks (`) on both sides:

The previous query gave you the same information that you saw in the Cloud Console. Now change the query to identify the top IP addresses that have exchanged traffic with your web-server.

In the new query, again replace your_table_id with TABLE_ID, keeping the backticks (`) on both sides.

Feel free to generate more traffic to the web-server from multiple sources and query the table again to determine the bytes sent to the server.
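Finding the top IP addresses amounts to grouping flow records by source and destination, summing the bytes, and sorting in descending order, much like a SQL `GROUP BY ... ORDER BY ... DESC LIMIT n`. The sketch below performs the same aggregation locally on hypothetical records shaped after the flow-log `connection` and `bytes_sent` fields; it is not the lab's BigQuery query. It assumes `bytes_sent` may arrive as a string (hence the `int()` conversion), and the `top_talkers` name and default `n` are illustrative.

```python
from collections import Counter

def top_talkers(records, n=10):
    """Sum bytes_sent per (src_ip, dest_ip) pair and return the n largest totals."""
    totals = Counter()
    for rec in records:
        conn = rec["connection"]
        # bytes_sent is assumed to be a numeric string, so convert before adding.
        totals[(conn["src_ip"], conn["dest_ip"])] += int(rec["bytes_sent"])
    return totals.most_common(n)

# Hypothetical exported rows, trimmed to the fields used here.
RECORDS = [
    {"connection": {"src_ip": "203.0.113.7", "dest_ip": "10.1.3.2"}, "bytes_sent": "1200"},
    {"connection": {"src_ip": "198.51.100.4", "dest_ip": "10.1.3.2"}, "bytes_sent": "500"},
    {"connection": {"src_ip": "203.0.113.7", "dest_ip": "10.1.3.2"}, "bytes_sent": "800"},
]

for (src, dst), total in top_talkers(RECORDS):
    print(src, "->", dst, total)
```

Because both endpoints of a flow can report it (the flow-log `reporter` field distinguishes the sides), VM-to-VM traffic inside the VPC may be counted twice unless you filter on one reporter.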

You will now explore the VPC Flow Logs volume-reduction settings. Because VPC Flow Logs samples packets, not every packet is captured in its own log record. Even with sampling, however, the captured log records can be quite large.

You can balance your traffic visibility and storage cost needs by adjusting specific aspects of logs collection, which you will explore in this section.

In the Console, open the Navigation menu (☰).

Click Enable Network Management API.

Click the Add VPC flow logs configuration button, then under Subnets click Add a configuration subnets.

On the Subnets in current project tab, in VPC networks, check vpc-net.

Click the checkbox next to vpc-subnet subnet and click Manage flow logs > Add new configuration.

Under Configurations - Subnets (Compute Engine API), click the drop-down Compute Engine API configuration.

Click Advanced Settings.

The purpose of each field is explained below:

Aggregation time interval: Sampled packets for a time interval are aggregated into a single log entry. This time interval can be 5 sec (default), 30 sec, 1 min, 5 min, 10 min, or 15 min.

Metadata annotations: By default, flow log entries are annotated with metadata information, such as the names of the source and destination VMs or the geographic region of external sources and destinations. This metadata annotation can be turned off to save storage space.

Log entry sampling: Before log entries are written, they can be sampled to reduce their number. By default, the log entry volume is scaled by 0.50 (50%), which means that half of the entries are kept. You can set this anywhere from 1.0 (100%, all log entries are kept) to 0.0 (0%, no log entries are kept).
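The aggregation interval and log entry sampling settings can be pictured as two stages: sampled packets are folded into one entry per flow per interval, and the resulting entries are then kept with probability equal to the sample rate. The sketch below models both stages on a hypothetical flow; the `(timestamp_s, flow_key, nbytes)` tuple layout and the function names are assumptions, and packet sampling itself is not modeled (the input is treated as already-sampled packets).

```python
import random
from collections import defaultdict

def aggregate(packets, interval_s):
    """Fold packets into one entry per flow per aggregation interval.

    `packets` is a list of (timestamp_s, flow_key, nbytes) tuples, a layout
    assumed for this sketch.
    """
    buckets = defaultdict(lambda: {"packets": 0, "bytes": 0})
    for ts, flow, nbytes in packets:
        bucket = buckets[(flow, int(ts // interval_s))]
        bucket["packets"] += 1
        bucket["bytes"] += nbytes
    return [
        {"flow": flow, "window": window, **totals}
        for (flow, window), totals in sorted(buckets.items())
    ]

def sample_entries(entries, rate, seed=None):
    """Keep each aggregated entry independently with probability `rate` (0.0 to 1.0)."""
    rng = random.Random(seed)
    return [entry for entry in entries if rng.random() < rate]

# One hypothetical flow sending a 500-byte packet every second for one minute.
flow = ("203.0.113.7", "10.1.3.2", 80)
packets = [(t, flow, 500) for t in range(60)]

default_entries = aggregate(packets, 5)   # 12 entries at the 5-second default
wider_entries = aggregate(packets, 30)    # 2 entries at 30 seconds
kept = sample_entries(wider_entries, 0.25, seed=1)
```

Widening the interval reduces the entry count without losing the byte totals, while sampling discards whole entries at random.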

Set the Aggregation Interval to 30 seconds.

Set the Sample rate to 25%.

Click Done and then click Save.

Setting the aggregation interval to 30 seconds can reduce your flow log size by up to 83% compared to the default interval of 5 seconds. Your flow log aggregation settings can therefore significantly affect both your traffic visibility and your storage costs.
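The 83% figure follows from counting entries: a flow that stays active throughout produces one entry per interval, so moving from 5-second to 30-second intervals leaves 5/30 = 1/6 of the entries, a reduction of 5/6 (about 83%). Flows shorter than the interval see a smaller reduction, which is why the figure is an upper bound. The sketch below computes that bound and, as an assumption about expected values only, also folds in the 25% sample rate set above relative to the 50% default; the function names are illustrative.

```python
def max_reduction(interval_s, default_s=5):
    """Upper bound on the fractional drop in entry count versus the default interval.

    Assumes one continuously active flow, which yields one entry per interval;
    flows shorter than the interval see a smaller reduction.
    """
    return 1 - default_s / interval_s

def remaining_fraction(interval_s, sample_rate, default_s=5, default_rate=0.5):
    """Expected share of entries left relative to the defaults (5 s, 50% sampling)."""
    return (default_s / interval_s) * (sample_rate / default_rate)

print(f"{max_reduction(30):.0%}")             # 83%
print(f"{remaining_fraction(30, 0.25):.1%}")  # 8.3%
```

Under these assumptions, the lab's settings (30 seconds, 25%) leave roughly 1/12 of the entries that the defaults would produce.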

You have configured a VPC network, enabled VPC Flow Logs, and created a web server in that network. Then you generated HTTP traffic to the web server, viewed the traffic logs in the Cloud Console, and analyzed them in BigQuery.


Manual last updated August 13, 2025

Lab last tested August 13, 2025

Copyright 2025 Google LLC. All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.
